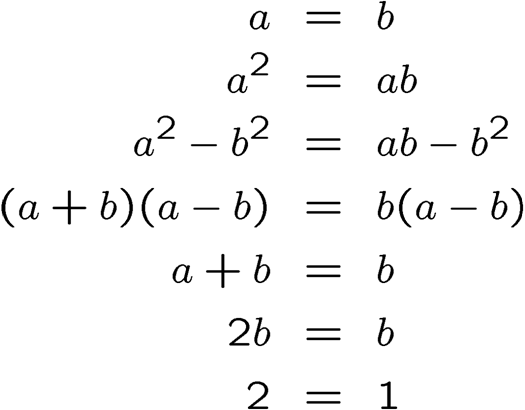

Via StumbleUpon, I came across this short text page listing three mathematical ‘proofs’ that seem to violate common sense. The first is:

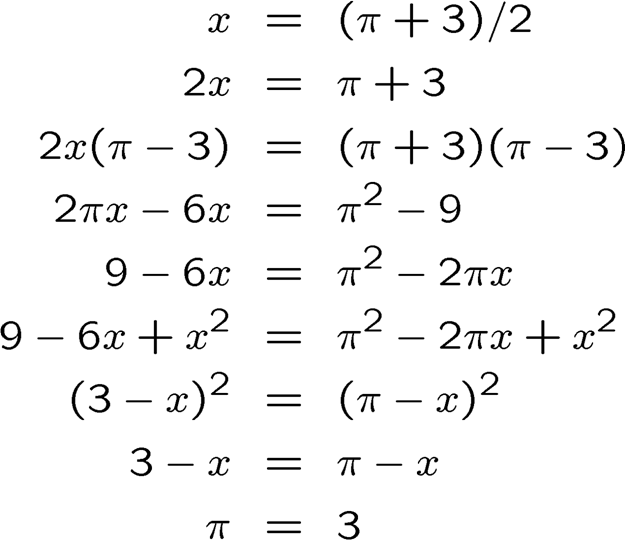

The second one is:

The third one is:

Each of these proofs is (intentionally) wrong! They highlight classic fallacies in mathematical thinking. See if you can figure out where, in each of them, the proof goes wrong, and then look for the answers below the fold…

(Note: the third proof involves the ‘imaginary number’ i. If you’re not familiar with it, you can safely skip that problem, as it is closely related to one of the others.)

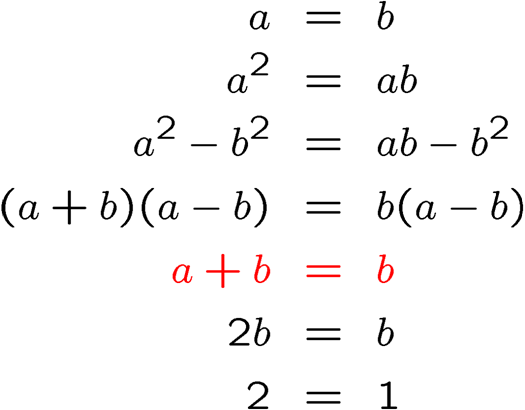

The mistake in the first proof is highlighted in red below:

The mistake lies in the assumption that we can divide by (a - b). Why is this a problem? Because, according to the equation we started with, a = b, so a - b = 0! We are not allowed to divide by zero, and therefore the result highlighted in red is invalid.

The algebra ‘muddies the waters’ significantly, and it can be helpful to see the same proof, but with . The first two steps suggest that

. The third step suggests that

. The fourth step suggests that

. We run into problems in the next step, in trying to divide both sides of this equation by zero!
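To make the failure concrete, here is a minimal Python sketch. It assumes the familiar form of this ‘proof’ (a = b, a^2 = ab, a^2 - b^2 = ab - b^2, (a + b)(a - b) = b(a - b), a + b = b); the function name `replay_first_proof` and the step labels are illustrative only. Each step is checked numerically, and the cancellation of (a - b) is attempted as an actual division.

```python
from fractions import Fraction


def replay_first_proof(a, b):
    """Replay the assumed steps of the a = b 'proof' and record which ones hold."""
    a, b = Fraction(a), Fraction(b)
    results = [
        ("a = b", a == b),
        ("a^2 = a*b", a * a == a * b),
        ("a^2 - b^2 = a*b - b^2", a * a - b * b == a * b - b * b),
        ("(a + b)(a - b) = b(a - b)", (a + b) * (a - b) == b * (a - b)),
    ]
    try:
        # The next step cancels (a - b) from both sides, i.e. divides by it.
        lhs = (a + b) * (a - b) / (a - b)
        rhs = b * (a - b) / (a - b)
        results.append(("a + b = b", lhs == rhs))
    except ZeroDivisionError:
        # With a = b the divisor is zero, so the step is not allowed at all.
        results.append(("a + b = b", "division by zero"))
    return results


for label, outcome in replay_first_proof(1, 1):
    print(f"{label:28} {outcome}")
```

With a = b = 1, the first four steps hold and the fifth is reported as a division by zero rather than as a true or false statement.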

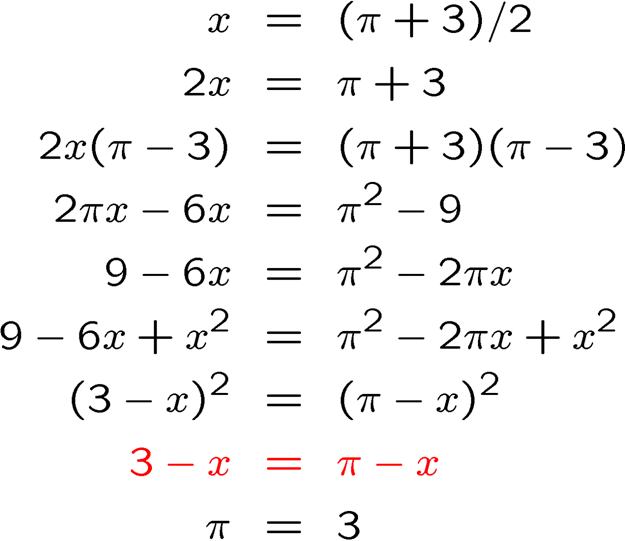

The next ‘proof’ is a little more subtle. Again, we highlight the problem step in red:

The problem here is that, in order to get to the step in red, there is an implicit square root taken of both sides of the equation. However, there are potentially two roots to any square; e.g. has possible solutions

or

. The ‘proof’ above assumes that the positive root is correct, which leads to the erroneous answer π = 3. If one looks at the negative root, one finds that

, which leads right back to the starting equation

. This proof, incidentally, might have been helpful to legislators in Indiana back in the late 1800s.

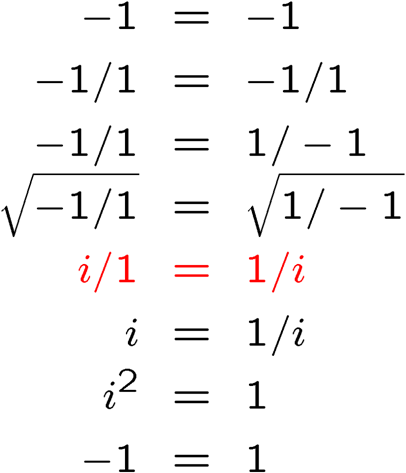

The third ‘proof’ suffers from essentially the same problem as the second, except that complex numbers are involved:

The step highlighted in red assumes that we break up the square roots as , and then take individually the positive root of each term. It is wrong, however, to assume that the positive roots are always the correct ones when dealing with the square root of an equation. If we have an equation of the form

,

we can at best say that , and not necessarily both are true. Nothing changes if we take the square root of an equation of the form

,

in which case we can at best conclude that

.

For our ‘proof’ above, we find that the statement in red should be replaced with “ or

, but not necessarily both.”
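A quick numerical check shows why the roots cannot be split term by term. This is a sketch using Python's standard `cmath` module, whose `sqrt` always returns the principal root. It assumes the red step amounts to treating sqrt(-1)/sqrt(1) and sqrt(1)/sqrt(-1) as equal; the variable names are illustrative.

```python
import cmath

# -1/1 and 1/-1 are the same number, so their principal square roots agree.
whole_left = cmath.sqrt(-1 / 1)
whole_right = cmath.sqrt(1 / -1)

# Splitting each root into a quotient of principal roots gives different values.
split_left = cmath.sqrt(-1) / cmath.sqrt(1)    # i / 1
split_right = cmath.sqrt(1) / cmath.sqrt(-1)   # 1 / i

print(whole_left, whole_right)    # the two sides agree
print(split_left, split_right)    # the two sides differ by a sign
print(cmath.isclose(split_left, -split_right))
```

The split quotients are negatives of one another, which is exactly the ‘one or the other, but not necessarily both’ situation described above.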

There’s a nice classic book that covers such mathematical errors, and other more important mathematical paradoxes: Bryan Bunch, Mathematical Fallacies and Paradoxes (Dover, New York, 1997).

If you really want to be certain that you understand such mistakes, try writing your own ‘proof’ for ! Feel free to post yours in the comments. I’ll add my own ‘proof’ at a later date!

Ted Chiang wrote a really lovely speculative fiction story entitled “Division by Zero”. There is a copy of dubious legality available here. I recommend buying his short story compilation Stories of Your Life and Others. Spring for the hardback copy. This book is a keeper, and the design of the paperback version is inexplicably hideous.

NOOOOO!!!!!! Not math!!! Although solving proofs was one of the few things I enjoyed when I was taking high school math, I’ve forgotten just about all of it. I’m going back to doing banking on my Excel spreadsheets. Thanks…

These are really about the special cases of definitions. That is the curse of proofs and programs alike. You have to look at form and values together so I guess that makes them hard?

Thanks for the explanations. I was sick of these types of things; I tried to explain them myself, but this is good.

Ya, thanks for the details. I’ve been tired of these getting passed around the internet. It’s known that they are math illusions, but having the details of where they are incorrect is great.

With the third problem. If you accept the square root as worked out . To solve 1/i you have to multiply the top and the bottom by the conjugate of the bottom, i.e. -i, which would leave you with -i/1, which when squared would give you i squared as on the other side. If I am not mistaken. Not that I know a lot, but I like maths…

This is very interesting. Spotting math errors comes in handy in my own work, which questions mainstream assumptions, both in science and mathematics.

I find that mainstream scholars and “experts” are much more likely to commit subtle errors.

NS

http://sciencedefeated.wordpress.com/

The problem with some of these “proofs” is you cannot prove that one number equals another using algebra. The whole point of algebra is solving for a variable.

I was helping an algebra one student with his homework the other day and found that when there was not a variable in the end they were taught to put unsolvable because you cannot solve for anything using algebraic means.

These are more of philosophic problems. A bunch of math geeks or philosophy majors trying to show off that the world is chaotic and not the same as we know it.

Thanks for the unproofs though…

Thanks for the laugh, was so freaking funny 🙂

I think you mean “late 19th century”, not “late 1900s”. 🙂

Jacob: Whoops! You’re right! I’ve touched it up in the post.

how little common sense there is!!!

Very effective explanation.

Divide by zero and taking only the positive value of a square root can fox many.

By the way, I discovered your blog through “stumble”. So the loop is complete.

Cheers!

PS: If you wish to read a really good book on math, I would recommend “Journey Through Genius” (link in my blog!)

nice explanation… sick of getting stupid msgs frm friends ( aspiring engineers… ) and explaining them…

y=100 z=0

x = (y+z)/2

2x = y+z

2x(y-z) = (y+z)(y-z)

2xy-2xz = y^2 – z^2

z^2-2xz = y^2 – 2xy

z^2-2xz + x^2 = y^2 – 2xy +x^2

(z-x)^2 = (y-x)^2

z-x = y-x

z = y

0 = 100

There.

Nice “demonstration” Shaun. This is quite convincing, in a misleading way.

Very interesting articles. Math is fun!!

for shaun’s question

(z-x)^2 = (y-x)^2

z-x = y-x

///

when you square root the answer, there are 2 possible solutions for each side +/-.. it might be :

z-x = y-x OR

x-z = x-y

this might cause discrepancy in the answer

(very similar to question 2 as posted by the author)

I just skimmed this, but I think you meant to write:

z-x = x-y OR z-x = y-x

as the two you write are equivalent to multiplying both sides by -1.
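Plugging Shaun's numbers into both candidate roots makes the point concrete. A small Python sketch; the variable names follow the comment, and `positive_branch` / `negative_branch` are labels chosen here for illustration.

```python
y, z = 100, 0
x = (y + z) // 2          # 50; exact because y + z is even

# The squared equation really does hold...
assert (z - x) ** 2 == (y - x) ** 2

# ...but only one of the two candidate roots is consistent with y and z.
positive_branch = (z - x) == (y - x)     # z - x = y - x, which would give z = y
negative_branch = (z - x) == -(y - x)    # z - x = x - y

print("z - x = y - x:", positive_branch)
print("z - x = x - y:", negative_branch)
```

Only the second branch survives, so the step from (z-x)^2 = (y-x)^2 to z-x = y-x is the one that picks the wrong root.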

lobster is right. Shaun, the answers are:

(z-x)^2 = (y-x)^2

z-x = y-x

z = y

0 = 100

or

(z-x)^2 = (y-x)^2

x-z = y-x

50 – 0 = 100 – 50

50 = 50 then….you sux

oh sorry, lobster took the bad answer, mine is right :P because from his answer it is

x-z = z-y

50 = -50

No need to demean Shaun. He was just doing what skulls suggested at the end. Though it would be nice if somebody could come up with a different false proof that looks realistic (as in, one that used a different error besides the two shown).

integral( tgx dx ) =

integral( sinx * 1/cosx dx ) =

integration by parts: | u’ = sinx dx u = -cosx, v = 1/cosx v’ = (sinx dx)/cos^2x | =

-1 + integral(sinx/cosx dx) =

-1 + integral( tgx dx )

finally:

integral( tgx dx ) = -1 + integral( tgx dx )

0 = -1

quermit: Nicely done! You’ve got a future in ‘bad’ math! 🙂

GREETINGS TO POLAND AND WYKOP.PL 😛

I found all of them 😉 Now I have circles in coordinate system on math.

Greetings to wykop.pl and Poland from me too ;D

Good Job 🙂

Greetings to wykop.pl

Matthew,

I think you are just a dumbass jealous of those who can understand basic math. Go to school and stop ‘dreaming’; math doesn’t try to show a chaotic world, it is YOU who thinks of it that way. Math is just math.

What happened is that you cannot understand math clearly, so you turn to ‘philosophy’ to try to explain it. Next you are going to say that a god wanted it to be that way…

Those are fake ‘proofs’ and here was shown why they are wrong (and by the way, it is not that difficult to spot such errors).

quermit,

I know where the catch in your ‘proof’ is =)

When you do integration by parts, you start from the assumption that (uv)’ = u’v + uv’

if you do u = -cosx and v = 1/cosx

(u*v)’ = 0

the error comes when you go back the other way and integrate both sides, creating solutions that do not match (integration by parts does this integration implicitly)

and therefore creating that fake proof. ^^

deep down, it is the same kind of mistake committed in the simpler algebraic problems demonstrated: assuming a ‘probable’ solution that is not the correct one ^^

I don’t know if you had this in mind when you posted, but I have written it out anyway…

========

I will post a similar problem for demonstrating how that kind of mistake can be generated in integration;

integral((e^x dx)/2)

| assume u = e^(x/2) , v’ = (e^(x/2))/2

u’ = (e^(x/2))/2 , v = e^(x/2) |

then,

integral((e^x dx)/2) = e^x – integral((e^x dx)/2)

e^x = integral(e^x dx)

That is not always true. Note that integral(e^x dx) = e^x + constant, so it is only true if constant = 0. This is an example of how false solutions are generated through integration by parts.
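One way to see where the constant hides is to redo quermit's integration by parts with explicit limits. This is a worked sketch, assuming an interval [a, b] on which cos x is never zero; the limits a and b are introduced here and are not part of the original comment.

```latex
\begin{align*}
\int_a^b \tan x \,dx
  &= \int_a^b \sin x \cdot \frac{1}{\cos x}\,dx \\
  &= \Big[(-\cos x)\cdot\frac{1}{\cos x}\Big]_a^b
     - \int_a^b (-\cos x)\cdot\frac{\sin x}{\cos^2 x}\,dx \\
  &= \big[-1\big]_a^b + \int_a^b \tan x\,dx \\
  &= 0 + \int_a^b \tan x\,dx .
\end{align*}
```

The boundary term is (-1) - (-1) = 0, so no contradiction arises. In the indefinite version, the two integral signs stand for antiderivatives that may differ by a constant (here -1), so they cannot simply be cancelled against each other.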

=======

Mostly, i believe that kind of error occurs if:

(u*v)” = (u*v)’ (not sure of it, i have to proof yet… but seemed to be it. Damn, i am not even a mathematic, wth am I doing here??!?!?)

Zhar, you are not mathematic or mathematician ? 😉

pitch

well, sorry for the error,

english is not my main language.

I am not a MATHEMATICIAN.

What??!!!! No Asymptotes???!!!!!

Ok try this one:

x=1+1+1+…+1(x ones)

x^2=x+x+…+x (multiply both side by x)

2x=1+1+…+1 (Differentiate both side)

2x=x (the 1st assumption)

2=1 !??

Hav fun 🙂

glubot

x=1+1+1+…+1(x ones)

2x=1+1+…+1 (Differentiate both side)

Nobody said that the number of 1s is equal in both statements.

Moreover, we can’t compare infinities; infinity isn’t a number

oh no =))))))

my fault, there is nothing infinite =))))

x=1+1+1+…+1(x ones) = constant

x^2=x+x+…+x (multiply both side by x) =

=(1+1+..+1)+(1+1+..+1)+…+(1+1+..+1) = constant

2x=1+1+…+1 (Differentiate both side)

Here it goes wrong, because both the left and the right side are constants

Should be 0 = 0 after diff

In fact, x isn’t a function, only a number. thus diff(x^2)!=2x, x doesn’t depend on anything

Cool!

Also, from 2b=b, you can only conclude 2=1 or b=0

We had a lot of these in school, every one of them involved dividing by zero at some point.

math is not misleading, it is just far too simple to be fooled with; it can’t be any simpler. just work with the already simplified transposition framework, no shortcuts, and you will never go wrong

COOL AND HOT

Chuck Norris can divide by zero.

Chuck Norris can make Pi rational by telling it to calm the fuck down.

Holy Shit skullsinthestars may be the funniest mutha fucker in the whole world. That is literally the best one Ive ever heard!!!!!!!

But seriously, I think my calc prof needs to read these dumb fuckin mistakes cuz he does that stuff all the time. there is truth in the fact that more educated people overlook simple mistakes….

I like the one that ends with pi=3 this is brilliant!!

Great! This is the kind of stuff that makes math interesting ;).

Take a look at this: ( it’s similar to the one by glubot : 2 = 1 )

x^n = x + x + … + x + x + x ( n times )

Differentiate both sides to get,

n(x^(n-1)) = 1 + 1 + … 1 + 1 + 1 ( n times )

So, we find n(x^(n-1)) = n !

[[ pi = 3 was superb ! ]]

Fantastic, found this through stumbleupon, I was about to say you should be a teacher 🙂 then I read more of your blog!

Do you mind if I put your blog on my blogroll? I’ve only just started blogging and you share a lot of my interests 🙂

bgecko wrote: “Do you mind if I put your blog on my blogroll? I’ve only just started blogging and you share a lot of my interests”

Certainly I don’t mind! I’m always happy to be on another blogroll. I’m glad you’re finding the site enjoyable!

first goes to glubot:

your mistake is at the differential. if i remember correctly, you differentiate a statement with a variable and not an equation, like d/dx of x^2 = 2x

second goes to TORNADO

you have a mistake right at your first statement

x^n = x*x*x*x*x(n times)

or

1^2=1

2^2=2+2

3^2=3+3+3

etc

and

1^3=1

2^3=(2+2)+(2+2)

3^3=(3+3+3)+(3+3+3)+(3+3+3)

etc

don’t know how to write it in math way, but you get the idea

anything wrong with this proof

0=0

sin^-1(0)=sin^-1(0)

180=0

180-90=0-90

90=-90

sin(90)=sin(-90)

1=-1

1+1=0

it is pretty obvious

180=0

@Jeffery Chanbers:

The range of the arcsine (a.k.a. “inverse sine” or “sin^-1”) function is [-pi/2, pi/2] (radian measure), which happens to be -90 degrees to 90 degrees inclusive. As a result, sin^-1(0) cannot be 180 because that is represented as either 180 or -180, which isn’t in the range at all. To summarize, your flaw is in your third step as optiranium pointed out.
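The restricted range is easy to confirm with Python's standard `math` module. A minimal sketch; `round_trip`, `samples` and `in_range` are names chosen here for illustration.

```python
import math

# math.asin returns the principal value, which lies in [-pi/2, pi/2].
print(math.degrees(math.asin(0.0)))

# sin(180 degrees) is (numerically almost) 0, but asin does not map it back to 180.
round_trip = math.degrees(math.asin(math.sin(math.radians(180.0))))
print(round_trip)

# Every output of asin stays within [-90, 90] degrees (small tolerance for rounding).
samples = [k / 10 for k in range(-10, 11)]
in_range = all(
    -90.0 - 1e-9 <= math.degrees(math.asin(s)) <= 90.0 + 1e-9 for s in samples
)
print(in_range)
```

Because the sine is not one-to-one, applying the inverse to both sides of 0 = 0 can only ever give back the principal angle, never 180.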

Here is a strange one:

x = 1

d/dx[x] = 1

d/dx[x] = x

d/dx[x^2] = x^2

2x = x^2

x^2 – 2x = 0

x^2 – 2x + 1 = 0 + 1

x^2 – 2x + 1 = 1

(x – 1)^2 = 1

x – 1 = +-sqrt(1)

x = 0 or x = 2

1 = 0 or 1 = 2

The flaw is admittedly easy to spot. Still, it was fun. ^_^

Oh, how i love math. ❤

On the last proof, are there not TWO incorrect steps? The second to last step “i = 1/i” followed by squaring both sides to obtain “i^2 = 1” is completely false as well, and is a much more glaring error.

My reasoning is thus: (1/i)^2 = 1/(i^2) = 1/-1 = -1? So the step in red might as well not be in error, because this one is a clear violation of imaginary numbers.

Am i off base here?

Tyler: Ah! There is, in a sense, an ambiguity about the step from i = 1/i to the step i^2 = 1. My intention, and the intention of the proof, is that one multiplies both sides of the expression by i, which results in i^2=1. One can also derive an expression for i^2 by squaring the equation i = 1/i; if you do that, you counteract the error created by taking the wrong square root of -1/1=1/-1.

To clarify the (erroneous!) proof, I should add a step as follows:

i = 1/i

i x i = i / i

i^2 = 1

Does that make sense?

Very interesting. I’d seen some of these before but couldn’t really explain where the faults were.

are you stupid or something?, I just read the first one and it’s completely wrong. If a=b, then a-b=0, so how the hell do you go from 0=0 to a+b=b? and nobody has noticed this simple problem yet?, math by its nature cannot contradict itself only stupid people practicing math can contradict themselves.

okay, second one is wrong too: multiplying pi + 3 and pi – 3 doesn’t give you pi squared -9. I didn’t pay attention in school and even I can tell where the problems are. the only thing I find interesting about these faulty equations is, how can someone with a limited knowledge of simple mathematical rules write these equations and have them posted and nobody tell them they’re fucked in the head.

and tyler hartley, you are not off-base

o shit, my bad I didn’t read the rest of the instructions, I didn’t know they were intentionally wrong. my bad.

Um, yes, step 1 in solving any math problem: READ THE INSTRUCTIONS! 🙂

Wow. Great example here of leaping before you look. This feels like a YouTube comment thread now. You’ll notice though i too thought i’d found a problem, i didn’t tell people they were stupid and insult them; i asked. Take a chill pill, don’t call people you’ve never met “fucked in the head”, and realize that everyone on the internet is not dumber than you.

And have a good day.

Hi, my name is Krzysztof.

John:

The point of the first one is that you have divided by (a-b), which is, of course, a faulty step in the proof. If a did not equal b, this would be a perfectly feasible mathematical operation.

Secondly, pi + 3 times pi – 3 DOES give you pi^2 – 9. It’s called FOIL. Ever heard of it? Most people learn it in Algebra I. If you’re over the age of 15 and have never heard of it, have fun working at McDonald’s.

That’s not a very nice thing to say. Also, try your FOIL with trinomials and above.

I’d love to point out yet another math fallacy, that to me is kinda strange.

log (base i) x = 3; and log (base i) x = 7. Always assume i=square root of negative one.

Solve for x in each case.

Then: i^3 = x and i^7 = x.

Then: x = -i, and x = -i.

Therefore: 3 = 7.

Very nice example! It arises from the fact that the logarithm function is multivalued in the complex plane, and in fact has an infinite number of possible values, just as the function sqrt(x) has two possible values.

It is a strange fallacy, and arises from the fact that the logarithm is a very strange function, especially with complex values!
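The many-valuedness can be seen directly with Python's `cmath`. This sketch assumes ‘log base i’ means log(x)/log(i) computed with the principal branch that `cmath.log` returns; the names `target` and `principal_exponent` are illustrative.

```python
import cmath

i = 1j
target = complex(0, -1)   # -i

# Several different exponents send i to the same value, because i**4 == 1.
for k in (-1, 3, 7):
    print(k, cmath.isclose(i ** k, target, abs_tol=1e-12))

# The principal logarithm picks just one of those exponents.
principal_exponent = cmath.log(target) / cmath.log(i)
print(principal_exponent)
```

The principal branch returns an exponent that is neither 3 nor 7, which underlines that ‘the’ logarithm of -i to base i is really a whole family of values spaced 4 apart.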

x^2=x+x+….+x(x times)

after differentiating

2x=1+1+……+1 does not hold, because the number of terms (x times) is itself a variable
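A discrete analogue illustrates the point. This is only a sketch for whole-number x, using a step from x to x + 1 in place of a true derivative; the helper names are hypothetical.

```python
def repeated_sum(value, count):
    """Add `value` to itself `count` times: value + value + ... + value."""
    return sum(value for _ in range(count))


def change_with_fixed_count(x):
    """Step x -> x + 1 in each term, but keep the number of terms at x."""
    return repeated_sum(x + 1, x) - repeated_sum(x, x)


def change_with_growing_count(x):
    """Step x -> x + 1 in each term AND in the number of terms."""
    return repeated_sum(x + 1, x + 1) - repeated_sum(x, x)


for x in (1, 5, 10):
    print(x, change_with_fixed_count(x), change_with_growing_count(x))
```

Holding the count fixed yields x, the analogue of the bogus 1 + 1 + … + 1. Letting the count grow as well yields 2x + 1, which is the true change (x + 1)^2 - x^2.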

Thanks for the approach and suggestions.

i am happy with this solution.

For the first one, line 4 to 5, it divides by (a-b). a = b so (a-b) = 0 Therefore line 4 to 5 divides by zero. proof is false.

Focus: every problem here is not wrong, it is right,

because when you start wrong you finish wrong

example

if x=y then 5=6

if a=b then 2=1

Half of a thousand is four hundred twenty five. Prove it. Anyone can help me?

Hello,

you have given the assumption a=b; we can’t assume that, because a will never be equal to b..

if this were possible, then we could assume 100=200 and draw different answers.

-1=-1 has some meaning because there is no assumption here…

thank you!

Pune (india).

(a+b)^2=1(say)

a^2+b^2+2 ab=1

a^2+b^2=1-2 ab

-2ab=-2ab+1

0=1

in the second-to-last step, a^2+b^2 should be replaced by 1-2ab and not by -2ab

kindly spot the error in this….

Evaluate the integral of e^{x}\cdot e^{-x}:

\int e^{x}\cdot e^{-x}dx

Let u = e^{-x} and dv = e^{x}dx.

Then du = -e^{-x}dx and v = e^{x}.

By integration by parts,

\int u dv = uv – \int v du

becomes

\int e^{-x}\cdot e^{x}dx = e^{-x}\cdot e^{x} – \int e^{x}\cdot (-e^{-x})dx

\int e^{-x}\cdot e^{x}dx = e^{0} + \int e^{x}\cdot e^{-x}dx

\cancel{\int e^{-x}\cdot e^{x}dx} = 1 + \cancel{\int e^{x}\cdot e^{-x}dx}

0 = 1

To prove 0=1

x=1 (say)………(1)

Multiply both sides by x,we get

x^2=x

Now subtract 1 on both sides

x^2-1=x-1

x^2-1^2=x-1

(x+1)(x-1)=x-1

x+1=1

x=0

But x=1 from (1)

0=1

Hence proved

:))

the second proof is better than the others.

In the third proof, there is also an error between the third last and second last steps. (1/i)^2 = (1^2/i^2) = (1/-1) = -1 = the left side of the equation, so this works after all.

In the first one, you aren’t dividing by (A-B); since it is on each side, the two cancel out; and assuming anything divided by itself equals 1, we may assume 0/0=1, which then removes the error.

For the first one, I believe the one above the highlighted one causes it, as a sneaky division by zero.