I’m in the mood to do something a little more ‘math-y’! A few weeks ago, Tyler at PowerUp did a nice post about the divergence of the harmonic series, and that got me thinking about the weirdness of infinite series. Since I’ve been working on a book chapter on infinite series anyway, as a part of my upcoming ‘math methods’ textbook, I thought I’d talk a little about infinite series and some unusual results associated with them!

So what is an infinite series? Let us first imagine an infinite ordered collection of numbers $a_n$, where $n$ represents the location in the sequence. Such a collection is called an infinite sequence; an example is the sequence

$$1, \frac{1}{2}, \frac{1}{4}, \frac{1}{8}, \ldots$$

An infinite series is the sum of the terms of an infinite sequence; for the sequence above, the infinite series is written as

$$1 + \frac{1}{2} + \frac{1}{4} + \frac{1}{8} + \cdots = \sum_{n=0}^{\infty} \frac{1}{2^n}.$$

The end of this equation is the mathematical shorthand for the summing of an infinite series: we ‘sum’ ($\Sigma$) from $n=0$ to infinity ($\infty$) terms of the form $1/2^n$.

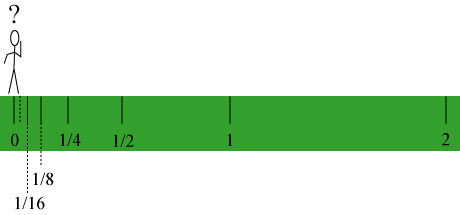

Such infinite series appear in mathematical physics all the time; the series above, with terms $1/2^n$, appeared very early in history in one of Zeno’s paradoxes! The so-called ‘dichotomy paradox’ was summarized by Aristotle as follows:

Zeno’s arguments about motion, which cause so much disquietude to those who try to solve the problems that they present, are four in number. The first asserts the non-existence of motion on the ground that that which is in locomotion must arrive at the half-way stage before it arrives at the goal. This we have discussed above.

In other words, in order for a person to walk 2 miles, they must first walk 1 mile. Before they walk 1 mile, however, they must walk 1/2 mile. Before they walk 1/2 mile, they must walk 1/4 mile. Extending this argument ad infinitum, Zeno argued that motion was apparently impossible, because to travel a finite distance involved an infinite number of intermediate events:

The total distance traveled can be added up, working backwards: 1 mile + 1/2 mile + 1/4 mile + 1/8 mile + 1/16 mile, etc.!

Zeno considered the dichotomy argument a paradox because it seemed irreconcilable that traveling a finite distance (2 miles) could be broken into an infinite number of ‘events’ (crossing the 1 mile mark, 1/2 mark, 1/4 mark, and so forth).

The mathematics of infinite series, though not necessarily completely resolving the paradox, demonstrates that it is mathematically consistent to consider the path as a succession of progressively smaller ‘half-steps’. In fact, we can exactly determine the numerical value of the sum of a series of the form

$$\sum_{n=0}^{\infty} r^n = 1 + r + r^2 + r^3 + \cdots.$$

A series of this form, where $r$ is a number whose absolute value is less than one, is known as a geometric series. We can determine the sum of this series using the following clever tricks:

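- Define a finite geometric series as $S_N = \sum_{n=0}^{N} r^n = 1 + r + r^2 + \cdots + r^N$.

- Note that $S_{N+1}$ may be written as $S_{N+1} = S_N + r^{N+1}$.

- Note that $S_{N+1}$ may also be written as $S_{N+1} = 1 + r S_N$.

- Equate these two versions of $S_{N+1}$: $S_N + r^{N+1} = 1 + r S_N$.

- Solve for $S_N$: $S_N = \dfrac{1 - r^{N+1}}{1 - r}$.

- In the limit $N \to \infty$, the term $r^{N+1}$ goes to zero provided $|r| < 1$, and $S_N$ becomes the sum $S$ of the infinite geometric series: $S = \dfrac{1}{1 - r}$.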

For the specific case of Zeno’s paradox, where $r = 1/2$, we find that the sum of the series is $S = \dfrac{1}{1 - 1/2} = 2$, which is exactly the total distance traversed in Zeno’s argument.
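If you would like to see the geometric series result in action, here is a minimal Python sketch. It compares the directly summed finite series $1 + r + r^2 + \cdots + r^N$ with the closed form $(1 - r^{N+1})/(1 - r)$ and with the limiting value $1/(1-r)$. The function names are my own choices for illustration and are not part of the original discussion.

```python
def geometric_partial_sum(r, N):
    """Directly add up 1 + r + r**2 + ... + r**N."""
    return sum(r**n for n in range(N + 1))


def geometric_closed_form(r, N):
    """Closed form (1 - r**(N+1)) / (1 - r) for the same finite sum (r != 1)."""
    return (1 - r ** (N + 1)) / (1 - r)


r = 0.5  # Zeno's case: each step is half the previous one
for N in (1, 5, 10, 50):
    direct = geometric_partial_sum(r, N)
    closed = geometric_closed_form(r, N)
    print(f"N = {N:2d}: direct = {direct:.12f}, closed form = {closed:.12f}")

print("infinite-series limit 1/(1 - r) =", 1 / (1 - r))
```

For $r = 1/2$ the printed partial sums creep up toward 2, the full length of Zeno's walk.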

The next natural question to ask is: what types of infinite series sum to a finite value, i.e. “converge”? In other words, if I am given an infinite series, how do I determine if it sums to a finite value? Clearly a series of the form

$$\sum_{n=1}^{\infty} n = 1 + 2 + 3 + 4 + \cdots$$

sums to an infinite value, i.e. “diverges”, simply because every term in the series is bigger than the previous one! In fact, one can show that the sum of a finite number of terms of the series can be written as:

$$\sum_{n=1}^{N} n = \frac{N(N+1)}{2}.$$

You can check this yourself for the first few terms of the finite series. As we let $N \to \infty$, we find that the sum must tend to infinity.
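If you would rather let a computer do the checking, here is a small sketch. It assumes the series in question is $1 + 2 + 3 + \cdots$, whose first $N$ terms add up to $N(N+1)/2$; the function name is only illustrative.

```python
def sum_first_n(N):
    """Add up 1 + 2 + ... + N term by term."""
    return sum(range(1, N + 1))


for N in (1, 2, 3, 4, 10, 100, 1000):
    formula = N * (N + 1) // 2
    print(f"N = {N:4d}: direct sum = {sum_first_n(N):6d}, N(N+1)/2 = {formula:6d}")
```

The two columns agree for every $N$, and both grow without bound as $N$ increases.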

It is obvious, then, that for the sum of a series to be finite, the terms of the sum must grow smaller in size as we get further along in the summation; that is, the quantity $a_n \to 0$ as $n \to \infty$. This condition is necessary for the series to converge, but is it sufficient?

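Here we come to the example of the harmonic series, which Tyler discussed. The harmonic series is of the form

$$\sum_{n=1}^{\infty} \frac{1}{n} = 1 + \frac{1}{2} + \frac{1}{3} + \frac{1}{4} + \cdots.$$

Each new term we add to the series is smaller than the previous one. As $n \to \infty$, $1/n \to 0$, so it would seem natural to assume that this series must converge to a finite value. However, it does not! To see this, we group the terms of the series in the following suggestive way:

$$1 + \frac{1}{2} + \left(\frac{1}{3} + \frac{1}{4}\right) + \left(\frac{1}{5} + \frac{1}{6} + \frac{1}{7} + \frac{1}{8}\right) + \left(\frac{1}{9} + \cdots + \frac{1}{16}\right) + \cdots.$$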

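The first parenthetical grouping is two terms, the next four terms, the next eight terms, and so on. Looking at the first grouping, we note that $1/3 > 1/4$. This means that

$$\frac{1}{3} + \frac{1}{4} > \frac{1}{4} + \frac{1}{4} = \frac{1}{2}.$$

Similarly, $1/5 > 1/8$, as are $1/6$ and $1/7$. This means that

$$\frac{1}{5} + \frac{1}{6} + \frac{1}{7} + \frac{1}{8} > \frac{4}{8} = \frac{1}{2}.$$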

The same argument can be made for each grouping. Therefore, the sum of each group of terms is greater than 1/2; since the series is infinite, there are an infinite number of groups of this form, and therefore the sum of the series is infinite!
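The grouping argument can be checked numerically. The sketch below sums the first $2^k$ terms of the harmonic series and compares the result with $1 + k/2$, which is the lower bound the grouping gives (one term of 1, then $k$ groups each worth at least 1/2). The function name is an illustrative choice of mine.

```python
def harmonic_partial_sum(N):
    """Add up 1 + 1/2 + 1/3 + ... + 1/N."""
    return sum(1.0 / n for n in range(1, N + 1))


for k in range(0, 21, 4):
    N = 2**k
    partial = harmonic_partial_sum(N)
    bound = 1 + k / 2
    print(f"N = 2^{k:<2d} terms: sum = {partial:8.4f}, grouping bound = {bound:5.1f}")
```

The partial sums grow very slowly, roughly like the logarithm of the number of terms, but they always stay at or above the bound, and the bound itself grows without limit.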

For a series to converge, it is not sufficient for the terms of the series simply to go to zero; the terms must go to zero at a very rapid rate. The geometric series described above has terms which go to zero sufficiently rapidly, while the harmonic series does not.

Now let’s get even weirder, and consider a ‘cousin’ of the harmonic series, the alternating harmonic series, defined as

$$\sum_{n=1}^{\infty} \frac{(-1)^{n+1}}{n} = 1 - \frac{1}{2} + \frac{1}{3} - \frac{1}{4} + \cdots.$$

This series differs from the harmonic series in that the terms alternate in sign. At first, one might think that this series doesn’t converge, but one can show, theoretically and computationally, that the series, summed as shown, approaches the value $\ln 2 \approx 0.693$, where $\ln$ represents the natural logarithm.

This series converges because the terms of opposing sign partially cancel each other out. This partial cancellation is enough to make the alternating harmonic series converge.
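Here is a quick computational check of that claim, as a sketch: it adds up the first $N$ terms of $1 - 1/2 + 1/3 - 1/4 + \cdots$ in their usual order and compares the result with $\ln 2$. The function name is mine, chosen for illustration.

```python
import math


def alternating_harmonic_partial_sum(N):
    """Add up the first N terms of 1 - 1/2 + 1/3 - 1/4 + ... in the usual order."""
    return sum((-1) ** (n + 1) / n for n in range(1, N + 1))


for N in (10, 100, 1000, 10000):
    partial = alternating_harmonic_partial_sum(N)
    print(f"N = {N:5d}: sum = {partial:.6f}, distance from ln 2 = {abs(partial - math.log(2)):.2e}")
```

The distance from $\ln 2$ shrinks only about as fast as $1/N$, which is a hint of how delicate the cancellation between positive and negative terms is.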

Here comes the weird part! We know from elementary arithmetic that addition is an order-independent operation; that is, when we add a finite set of numbers together, say $a$, $b$ and $c$, we get the same answer regardless of which numbers we add first:

$$(a + b) + c = a + (b + c) = (a + c) + b,$$

and so forth. Rearranging the order of summation does not change the value of the sum.

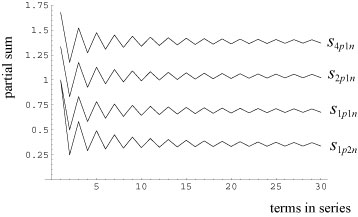

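Is this also true if we rearrange the order of an infinite series such as the alternating harmonic series? We consider the following different addition schemes:

1p + 1n: the ‘normal’ addition, we add one positive term, then one negative term:

$$1 - \frac{1}{2} + \frac{1}{3} - \frac{1}{4} + \frac{1}{5} - \frac{1}{6} + \cdots$$

1p + 2n: we add one positive term, then two negative terms:

$$1 - \frac{1}{2} - \frac{1}{4} + \frac{1}{3} - \frac{1}{6} - \frac{1}{8} + \cdots$$

2p + 1n: we add two positive terms, then one negative term:

$$1 + \frac{1}{3} - \frac{1}{2} + \frac{1}{5} + \frac{1}{7} - \frac{1}{4} + \cdots$$

4p + 1n: we add four positive terms, then one negative term:

$$1 + \frac{1}{3} + \frac{1}{5} + \frac{1}{7} - \frac{1}{2} + \frac{1}{9} + \frac{1}{11} + \frac{1}{13} + \frac{1}{15} - \frac{1}{4} + \cdots$$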

Though we are performing an infinite rearrangement of the terms of the series, one might expect that the sum must be the same regardless of the order we take them, right?

Wrong! Let us numerically sum the series as described above; the horizontal axis roughly represents the number of terms of the series we have summed so far:

Rather remarkably, the series converges to different values depending on how we sum it! In fact, one can prove rigorously that, by making an infinite rearrangement of the terms of the alternating harmonic series, one can get it to sum to any value you desire, including infinity!
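To try this yourself, here is a minimal sketch of the numerical experiment. It is not the code used for the original plot; the function name and the block counts are my own assumptions. Each ‘block’ takes `p` unused positive terms followed by `q` unused negative terms. For comparison it also prints $\ln 2 + \tfrac{1}{2}\ln(p/q)$, a standard closed form for this kind of rearrangement that the post itself does not derive.

```python
import math


def rearranged_alternating_harmonic(p, q, blocks):
    """Sum the alternating harmonic series taking p positive terms
    (1, 1/3, 1/5, ...) and then q negative terms (-1/2, -1/4, ...),
    repeated `blocks` times. Every term is used exactly once."""
    total = 0.0
    odd = 1    # denominator of the next unused positive term
    even = 2   # denominator of the next unused negative term
    for _ in range(blocks):
        for _ in range(p):
            total += 1.0 / odd
            odd += 2
        for _ in range(q):
            total -= 1.0 / even
            even += 2
    return total


for p, q in [(1, 1), (1, 2), (2, 1), (4, 1)]:
    numeric = rearranged_alternating_harmonic(p, q, 200_000)
    closed = math.log(2) + 0.5 * math.log(p / q)
    print(f"{p}p + {q}n: numeric = {numeric:.4f}, ln 2 + 0.5 ln(p/q) = {closed:.4f}")
```

The same terms are being added in every case; only the rate at which positive and negative terms are consumed differs, and that alone is enough to move the limit.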

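The infinite case is easy to show; simply add together all the positive terms before adding any negative terms! As we add the positive terms together, we have a series of the form,

$$\sum_{n=1}^{\infty} \frac{1}{2n-1} = 1 + \frac{1}{3} + \frac{1}{5} + \frac{1}{7} + \cdots.$$

But consider the following: $\frac{1}{2n-1} > \frac{1}{2n}$. This means that the sum satisfies the relation,

$$\sum_{n=1}^{\infty} \frac{1}{2n-1} > \sum_{n=1}^{\infty} \frac{1}{2n} = \frac{1}{2} \sum_{n=1}^{\infty} \frac{1}{n}.$$

Formally, the right-hand side is half the sum of the harmonic series, which we know to be infinite. ‘Half of infinity’ is still infinite, so summing all positive terms first results in an infinite sum!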

To try and understand the peculiar behavior of the alternating harmonic series, we try rearranging the order of another series, the ‘alternating quadratic’ series:

$$\sum_{n=1}^{\infty} \frac{(-1)^{n+1}}{n^2} = 1 - \frac{1}{4} + \frac{1}{9} - \frac{1}{16} + \cdots.$$

It is still an alternating series, but the terms go to zero ‘faster’ than those of the alternating harmonic series. The corresponding computation results in a plot of the form:

No matter how we sum the series, it always eventually approaches the same limit, approximately 0.82 (the exact value is $\pi^2/12$)!
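The same kind of experiment can be sketched for the alternating quadratic series $1 - 1/4 + 1/9 - \cdots$. As before, the function name and block count are illustrative assumptions rather than the original computation; the comparison value $\pi^2/12 \approx 0.8225$ is the known sum of this series in its usual order.

```python
import math


def rearranged_alternating_quadratic(p, q, blocks):
    """Sum 1 - 1/4 + 1/9 - 1/16 + ... taking p positive terms
    (1, 1/9, 1/25, ...) and then q negative terms (-1/4, -1/16, ...),
    repeated `blocks` times."""
    total = 0.0
    odd = 1    # n for the next unused positive term 1/n**2
    even = 2   # n for the next unused negative term -1/n**2
    for _ in range(blocks):
        for _ in range(p):
            total += 1.0 / odd**2
            odd += 2
        for _ in range(q):
            total -= 1.0 / even**2
            even += 2
    return total


for p, q in [(1, 1), (1, 2), (2, 1), (4, 1)]:
    numeric = rearranged_alternating_quadratic(p, q, 100_000)
    print(f"{p}p + {q}n: numeric = {numeric:.4f}, pi^2/12 = {math.pi**2 / 12:.4f}")
```

Every scheme lands on the same number, in contrast with the alternating harmonic series.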

Evidently there are two distinct ways in which a series can converge to a finite sum. Some series, like the alternating quadratic series, have terms which go rapidly to zero. In essence, the sum of the series is almost entirely determined by the low-$n$ terms of the series, and the higher terms contribute almost nothing. Other series, like the alternating harmonic series, only converge because adjacent members of the series partially cancel each other out. In essence, every term of the series contributes significantly to the total sum. When we rearrange the series, for instance by using more positive terms than negative terms, we are ‘favoring’ the positive terms: because we are summing infinitely, the negative terms never get a chance to ‘catch up’!

A series which sums like the alternating harmonic series is called conditionally convergent: it only sums on the ‘condition’ that the alternating terms cancel, and if we took the sum of the absolute values of the terms $a_n = (-1)^{n+1}/n$, i.e. $\sum_{n=1}^{\infty} |a_n| = \sum_{n=1}^{\infty} 1/n$, we would find the series diverges (it would in fact just be the harmonic series). A series which sums like the alternating quadratic series is called absolutely convergent, and it converges even if we sum the absolute value of each of the terms, i.e. $\sum_{n=1}^{\infty} 1/n^2$, though it doesn’t necessarily converge to the same value. In fact, the definition of an absolutely convergent series is that the sum of the absolute value of the terms is finite, i.e.

$$\sum_{n=1}^{\infty} |a_n| < \infty.$$

Most problems in mathematical physics involve absolutely convergent series. Such series are convenient because they behave like ‘real’ numbers: we can add them together in any order we want, and the product of two absolutely convergent series is simply the product of the sums of the individual series.

It would be nice to say that conditionally convergent series never make an appearance in mathematical physics, but it isn’t true: such series appear in a number of physically relevant mathematical problems. It is just another example of the reality that we always must be a little careful when working with infinity in mathematics.

And it shows that infinite series are kinda weird!

Very interesting! I was completely unaware that reordering the terms in a conditionally convergent series might give you a different sum.

Does this only happen if you group different numbers of positive and negative terms? For example, if you group two positive then two negative terms (ad infinitum), would the sum remain the same?

Also, could you give an example of one of the physically relevant problems you mentioned that uses a conditionally convergent series?

Wade: Good questions! I think, though I’d have to check, that 2p + 2n would keep the sum the same. A change in the sum comes when one sums positive and negative terms at a different rate.

As for the physically, or at least mathematically, relevant appearances of a conditionally convergent series, there are two:

The Taylor series expansion of $\ln(1+x)$, as $x \to 1$, becomes the alternating harmonic series, with sum $\ln 2$. Also, the Fourier series of a square wave is roughly proportional to the alternating harmonic series at certain points, making it conditionally convergent.

Both of these examples, however, are associated with some other sort of ‘singular’ behavior. In the case of the Taylor series expansion, $x = 1$ is on the edge of the domain of convergence. In the case of the Fourier series, the square wave function is discontinuous. These are both useful results, however, and could in principle be used in theoretical calculations. One must take care, however, that they are not used in such a way as to wreck the ‘proper’ behavior of the series.

You probably know this, but it’s a basic theorem in analysis (due to Riemann) that *any* conditionally convergent series (not just the alternating harmonic series) can be rearranged to sum up to *any* number you want (actually, a stronger statement is true, but it’s too technical to write up here).

At first this seems remarkable. But not so remarkable if you think about it for a minute… The two key ideas in the proof are: (1) failure to converge absolutely means you can find a rearrangement to make the partial sums as large (or as small) as desired, and (2) conditional convergence means the tail of the original series is getting smaller and smaller. Thus you add up “just enough” terms to barely overshoot your desired sum, then add “just enough” oppositely-signed terms further out in the series to just barely undershoot, etc… Continuing inductively, you can make the sum as close as desired to any preassigned value. See Theorem 3.54 on p.76 of Rudin’s “Principles of Mathematical Analysis, 3rd. Ed.,” by McGraw Hill. Weird stuff!
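The overshoot/undershoot recipe is easy to sketch in code for the alternating harmonic series. This is only an illustration under my own assumptions (the function name, the term counts, and the greedy one-term-at-a-time formulation are not from Rudin): while the running total is at or below the target, take the next unused positive term; otherwise take the next unused negative term.

```python
def rearrange_to_target(target, n_terms):
    """Greedily reorder 1 - 1/2 + 1/3 - 1/4 + ... so the partial sums
    home in on `target`. Returns the partial sum after n_terms terms."""
    total = 0.0
    odd = 1    # denominator of the next unused positive term
    even = 2   # denominator of the next unused negative term
    for _ in range(n_terms):
        if total <= target:
            total += 1.0 / odd     # not yet past the target: push upward
            odd += 2
        else:
            total -= 1.0 / even    # overshot the target: pull back down
            even += 2
    return total


for target in (1.5, 0.0, -1.0):
    print(f"target = {target:5.2f}: partial sum after 200000 terms = "
          f"{rearrange_to_target(target, 200_000):.5f}")
```

Each time the running total crosses the target, it misses by no more than the size of the term just added, and those terms shrink to zero, which is why the partial sums settle on the chosen value.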

Also, regarding arithmetic of infinite series, it turns out you can add, subtract, and scalar-multiply *any* convergent series (even conditionally convergent ones) and get the right answer, provided you’re not rearranging. For absolutely convergent series, you can rearrange all you want, and you always get the same sum.

Finally, regarding multiplication of series, Mertens showed you can drop the hypothesis that both series need to be absolutely convergent; in fact only one of the two series needs to converge absolutely — the other can converge conditionally, and the conclusion is still valid. This might be useful in certain cases. This is Theorem 3.50 in Rudin’s book, referenced above.

Mike

Mike: Thanks for the comments! I (very recently) learned about most of these when I was researching for my book chapter on infinite series, but it’s nice to have them spelled out for the blog. I especially like the prescription of how to actually make the series converge to a given number.

You should have your own math blog, if you don’t yet!


I still have trouble believing that alternating series’ sums can be changed by altering the rate of positive and negative numbers being added together. Although you add positive numbers together more frequently, you still have the negatives that must be added up at infinity. Just because there’s no limit on the terms, doesn’t mean that the terms can be canceled out.

It seems like the paradox (can’t think of who stated it, it’s not at the top of my head) that this mathematician used to prove the existence of god from creating something from nothing. It used 1-1 = 0, and filled in the places for an = 0, changed the parentheses, and ended up with 1. The critical error in this was the fact that he forgot to subtract the zero from the very end, at infinity.

ex: 0 + 0 + 0 + … = (1-1) + (1-1) + (1-1) + … = 1 + (-1+1) + (-1+1) + … = 1 + 0 + 0 + … = 1, therefore 0 = 1.

(really, there is a lone “-1” outside of the parentheses at the very end leading to 0 = 0 as it should be.)

We lack the capabilities to calculate any value at infinity. Many methods are good approximations, but still, I can’t trust that rearranging values in a conditionally converging series can alter the sum to equal anything one desires; there HAS to be a flaw in the proof. Maybe the negatives, or the positives get lost in infinity, but I could guarantee that as long as the arithmetic is correct, they _will_ catch up in the end, but outside of our current ability to calculate.

joe: The problem with your reasoning is assuming that there is a ‘very end’ to the summation: there isn’t any end to the summation, as it is, well, infinite!

Infinity causes all sorts of problems as a concept. One could say that this problem of conditional convergence is similar to the problem of dividing infinity in two: no matter how many times you ‘slice up’ an infinite number, you’re still left with infinity. This idea is completely at odds with normal rules of multiplication/division.

Keep in mind that all the regular rules of arithmetic were developed for finite summations, long before anyone introduced the concept of the infinite. It is not an idea which we are exposed to in our daily existence, to be sure. With that in mind, there isn’t necessarily a reason to expect that infinite sums should always behave like finite sums, and in fact they don’t.

I do know the basis of the theorem, and I do understand how it works. It’s not the easiest thing to comprehend, but it is definitely believable. But, this theorem is still built on the loose foundation of our understanding of infinity.

I’m well aware of the fact that infinity causes many issues with normal mathematical rules. But I’m sure we can agree if infinity were divided, the speed in which it reaches the theoretical end (non-existent, but still where the numbers eventually go) would in effect slow down, and if it were multiplied, that speed would increase. (On the x-axis, for x > 0: divide 1 by x and have x approach 0, now divide 2 by x and do the same; the second case is increasing at a higher rate to infinity.)

But in essence, we’re not really talking about infinity itself, more of the summation of an infinite amount of terms converging should they meet a certain set of requirements. Simply rearranging terms cannot have any effect on the overall sum, no matter what we do. If we add the positive and negative terms at varying rates, we do shift values. The negative terms may not be able to “catch up” in the end mathematically, but they do still exist. Effectively speeding up the rate to infinity for certain numbers is almost like multiplying every n-th term and not the others. Right there, it breaks the idea that one is simply rearranging the terms. It could almost be said that you’re increasing the number of positive/negative terms in the infinite series. Although infinity is infinity, if you add 1 to one infinite set, and not the second one, the first set would be larger by 1.

In the case given by 1/x and 2/x above, the two equations never overlap. Assuming that they do at x = 0 would assume that 1/x rapidly increases faster than 2/x which would be impossible. This proves infinity is not a single term and can in fact be larger or smaller. This would mean that from x = 0 to x = n, the length of the curve of 2/x is longer than that of 1/x, translated to an infinite amount of infinitesimals, the amount of terms in 2/x is larger than 1/x. Given, neither of these converge absolutely, but that is part of the proof of the theorem.

At least that’s my understanding; it explains how different sums are reached and how it is beyond simply rearrangements. If there are any flaws in my logic though, please let me know.

Hi Joe. Intuitive reasoning can be pretty convincing when it comes to finite things, but the main problem with your arguments is that your intuitive reasoning leads you astray when it comes to the infinite. That’s why we need rigorous and precisely stated axioms, definitions, and theorems.

Rather than address each point separately, I think you’ll get a larger payoff if you see some concrete examples of where intuitive reasoning about infinite things fails, and if you can come to understand the need for rigorous definitions, axioms, and theorems. Therefore, I will elaborate a little bit on some “infinity” concepts in this post, so that you can see the type of thing I’m talking about. Limits, infinite series, and counting infinite sets all involve the concept of infinity. Most of your arguments involving these three concepts are in error precisely because intuitive reasoning about infinity often doesn’t work. Don’t feel bad. This is not natural to *anybody,* and it took mathematicians thousands of years to finally develop a rigorous understanding of these ideas.

Let me illustrate with a compelling example that shows where intuitive reasoning can go astray and how a rigorous approach can rescue us. In particular, I will prove in a moment the completely counterintuitive result that the set of all even integers has the same cardinality (i.e. size) as the set of all integers!

After centuries of failed attempts to define the size (i.e. cardinality) of an arbitrary set in any consistent and intuitive way, mathematicians finally abandoned the idea, focusing their attention instead on the *relationship* between the sizes of two sets.

Some rigorous definitions: Two sets A and B have the same cardinality, denoting this by card(A)=card(B), if they can be put in a one-to-one correspondence with each other (i.e., we can find a one-to-one mapping from A into B, and a one-to-one mapping from B into A. By a one-to-one mapping, we mean that no two distinct elements can be mapped to the same thing). We say card(A)<=card(B) if there is a one-to-one map from A into B. We say card(A)<card(B) if card(A)<=card(B) and it is not the case that card(B)<=card(A) (i.e. there is a one-to-one map from A into B, but not vice versa). By the notation card(A)>=card(B) we mean there is a one-to-one map from B into A, and card(A)>card(B) means there is a one-to-one map from B into A but not vice versa.

Example: Let A={a,b,c} and B={1,2,3}. It seems clear A and B have the same cardinality, but let’s prove it. Define the mapping f:A->B by f(a)=1, f(b)=2, and f(c)=3. Then f is a one-to-one mapping of A into B since no element of B is the image of more than one element of A; hence card(A)<=card(B). Similarly, we may define the one-to-one map g:B->A by g(1)=a, g(2)=b, and g(3)=c. Thus card(B)<=card(A). Consequently, according to our definition, card(A)=card(B).

Example: Let A={a,b,c} and B={1,2,3,4}. It should be clear that card(A)<card(B). Proof: f:A->B defined by f(a)=1, f(b)=3, f(c)=4 is a one-to-one mapping from A into B, so card(A)<=card(B). But no matter how hard I try, I cannot find a one-to-one mapping from B into A. Something in A will get “hit” by two things in B no matter how hard I try! This is obvious but sounds a little hand wavy. Look up the “Pigeonhole Principle” on Wikipedia. Or, if you’re really bored, you may try all 3^4=81 possible mappings from B into A, and you’ll see that none of them are one-to-one! Thus it is not the case that card(B)<=card(A). Combining this with the fact that card(A)<=card(B), we get card(A)<card(B).
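For anyone who would rather not try all 81 mappings by hand, a brute-force check is short to write. This is a sketch with a helper name of my own choosing; it lists every possible assignment of images and counts how many are one-to-one.

```python
from itertools import product


def count_injective_maps(domain, codomain):
    """Enumerate every map domain -> codomain and return
    (total number of maps, number that are one-to-one)."""
    total = 0
    injective = 0
    for images in product(codomain, repeat=len(domain)):
        total += 1
        # A map is one-to-one exactly when no image value is repeated.
        if len(set(images)) == len(domain):
            injective += 1
    return total, injective


A = ["a", "b", "c"]
B = [1, 2, 3, 4]
print("maps from B into A:", count_injective_maps(B, A))  # → (81, 0)
print("maps from A into B:", count_injective_maps(A, B))  # → (64, 24)
```

None of the 81 maps from B into A is one-to-one, while plenty of maps from A into B are, which matches card(A)<card(B).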

By now you should get the idea. I think you’ll agree this matches up perfectly with our intuitive notion for the cardinality of sets. The advantage of our rigorous definition of the relationship between cardinality of sets is that we can readily apply it to infinite sets.

Thus let us now come to the infinite. It seems “intuitively obvious” that the set of all even integers has “half as many” elements as the set of all integers. But this is wrong! In fact, they have the same cardinality!

Proof: Let E denote the even integers, i.e. E={…,-4,-2,0,2,4,…} and let Z denote all the integers, i.e. Z={…,-2,-1,0,1,2,…}. Define the mapping f:Z->E by f(n)=2n. Then f is a one-to-one mapping from Z into E, because if 2m=f(m)=f(n)=2n we must have m=n, and thus no two integers in Z get mapped to the same even integer in E. Hence card(Z)<=card(E). Now we will define a one-to-one map g from E to Z. Note that every even integer in the set E may be written as 2m for some integer m in Z (this is what it means for an integer to be even!) Thus define g:E->Z by g(2m)=m. This map is one-to-one because if m=g(2m)=g(2n)=n then 2m=2n, and thus no two even integers in E get mapped to the same integer in Z. Hence card(E)<=card(Z). Consequently card(E)=card(Z), i.e. E and Z have the same cardinality!

At first this seems at odds with our intuition. But it’s not so counterintuitive if you think this way: Every single element of E corresponds to exactly one element in Z and vice versa! Thinking this way, it now seems obvious that E and Z have the same cardinality, since there is such a one-to-one correspondence between the sets.
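A computer can only ever look at a finite window of the integers, so the following is an illustration rather than a proof. It is a sketch, with function names chosen to match the maps f(n)=2n and g(2m)=m, checking that the two maps undo each other and never send two different inputs to the same output.

```python
def f(n):
    """Map an integer n to the even integer 2n."""
    return 2 * n


def g(e):
    """Map an even integer e = 2m back to the integer m."""
    if e % 2 != 0:
        raise ValueError("g is only defined on even integers")
    return e // 2


window = list(range(-1000, 1001))      # a finite slice of Z
evens = [f(n) for n in window]         # the matching slice of E

print(len(set(evens)) == len(window))          # f never repeats an output
print(all(g(f(n)) == n for n in window))       # g undoes f
print(all(f(g(e)) == e for e in evens))        # f undoes g
```

All three checks print `True`: within the window, every integer is paired with exactly one even integer and vice versa.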

You thus made a mistake in your intuitive statement “Although infinity is infinity, if you add 1 to one infinite set, and not the second one, the first set would be larger by 1.” NO! This is exactly the kind of intuitive reasoning that can lead you astray. The proof above showing that the cardinality of the even integers is the same as that of all integers is a more striking example that this is wrong. If you want another popular example, look up the fascinating and compelling “Hilbert’s Hotel” on Wikipedia.

This is the kind of flawed intuitive reasoning you use repeatedly when arguing about limits, rearrangements of infinite series, and counting. I hope now you can see the need for rigorous definitions and theorems.

You did, however, have a kernel of truth in your post. You said: “infinity is not a single term and can in fact be larger or smaller.” True! Thus the admonishment math professors are always dishing out to their students: “Infinity is not a real number!!”

(NOTE: The symbols $+\infty$ and $-\infty$ are “extended real numbers,” in the sense that we often treat them like real numbers, provided we extend the order relation on the real numbers in a certain way, and provided we don’t do things with the infinity symbol that will get us in trouble. Again, see Rudin, this time pages 11-12).

Your idea that there are different sizes of infinity is absolutely correct. A set A is countably infinite if card(A)=card(Z) (remember what this means? It means you can find a one-to-one map from A into Z and vice versa). A set that is countably infinite is said to have cardinality aleph-nought, i.e. $\aleph_0$. Any two sets that are countably infinite have the same cardinality. However, there are “larger” infinities. For example, if R stands for the set of real numbers, it can be shown that card(R)>card(Z). That is, there is a one-to-one map from Z into R, but there is NO one-to-one map from R into Z. Thus card(R) is a “larger” infinity than card(Z). We denote card(R) by $\mathfrak{c}$. The continuum hypothesis asserts there does not exist a set A with card(Z)<card(A)<card(R). That is, there are no “infinities” between the cardinality of the integers and the cardinality of the reals. Answering the continuum hypothesis is probably the most famous unsolved problem of set theory today.

Suggestion: If you’re interested in understanding set theory, I highly recommend the classic book “Naive Set Theory” by Paul Halmos. You can find it on Amazon.com pretty cheap.

Back to the original post about rearrangements of infinite series, I first suggest that you read the proof. It’s on page 77 of Walter Rudin’s classic “Principles of Mathematical Analysis, 3rd. Ed.” You can find it at any university library. If you don’t understand the proof at first, you need only read the preceding 76 pages of introductory material! (If you can’t get Rudin’s book, you can almost surely find it in any “advanced calculus” book or introductory book on mathematical analysis. Check your local library.)

One final remark: After you’ve worked with this stuff for a while, you will develop a pretty solid intuition about infinity. People aren’t born with this intuition, but rather it ripens over time, like wine. And what a sweet wine it is…

Mike:

Thank you for such a quick reply. It did explain a lot and certainly cleared up a lot. I’ve read the proof a few times actually, it even detailed the basics in my calculus textbook a while back, which is what brought me to this topic in the first place actually. I definitely now understand the logic in how the theorem works.

After reading up on the “Grand Hotel,” the “Pigeonhole Principle,” and also on the continuum hypothesis, I must say, Riemann’s series theorem can in fact be proven by the set of current axioms. However, that relies on the fact that our axioms are true.

A lot of this is really fascinating and has definitely inspired me to study set theory in depth, even if it is on my own time. One thing still intrigues me though; if, by rearranging the alternating harmonic series to converge to 1/2 of the usual sum, how does the cardinality of the negatives equal the cardinality of the positives? an = { + – – + – – + – – … } (only signs were shown for clarity) It’s obviously not the case. For every measured 3 consecutive terms, there is one positive and two negative. Therefore, card(a+) < card(a-); it could be said that card(a-) = 2*card(a+). In the original set, card(a+) = card(a-).

Let A = abs(an)

If we measure the cardinality of first set, denoted an, as card(an), and the second set, denoted as bn, as card(bn), if card(an) = card(a+)+card(a-) = 2*card(A). If card(bn) = 3*card(A), how can card(an) = card(bn)?

I’m reluctant to believe that. My hypothesis I just presented can account for the change in sums. This would prove, given I had a formal proof of the statement, that rearranging the sums of a conditionally convergent series is more than just rearranging the terms, it’s relying on infinity to create the terms that were not there in the first place, (which would be the case if the series was finite, but seeing as the pattern holds true for large values of n, there is reason to believe it would do so as it approaches infinity, and no reason to believe otherwise). This would mean that more terms were added and that the sum is a result of the addition of a new set.

This would require proof that there is a set A for which card(Z)<card(A)<card(R) (using the same names for the same sets in your reply). But is it not possible that the cardinality could be altered by changing the value of the step size of the “continuum” of real numbers? This would imply that real numbers are not continuous by our current definition. However, if there is an infinitesimal value as a step size between each real number, and that the value can be larger or even smaller (infinity can be “larger” or “smaller”, so would the notion that an infinitesimal could act the same way?). By changing the step size you could effectively prove that there are more terms in set A if the infinitesimal is smaller.

CH states that 2^(aleph-alpha) = (aleph-alpha + 1).

Would it not make more sense to define (aleph-(alpha+1)) to be equal to (aleph-alpha)*(ratio of the larger step size to the smaller)? The cardinality of finite sets follows this with an error decreasing as the cardinality of the sets becomes large.

The step size, (delta)n = 1 for integers, and the step size (delta)x is infinitesimal. It makes sense that you need an infinitely large amount of terms for a real number to equal the next integer, while you only need one more term for Z to be the next integer. if card(z) = 2 (z = [0 1] for example), and if r’s step size is 1/2, you need two steps for r = z. Doing so, card(r) is now 3. As r’s step size, dx, decreases, card(r) increases for each step in z. card(r) = 1/dx*(card(z)-1) for finite terms. If the terms of r and terms of z are then brought to represent real numbers and integers respectively, and dx is brought to an infinitesimal, does it not make sense that card(R) = (1/dx)*(card(Z)-1)?

If so, card(Z)-1 can equal aleph-null as adding a finite number (-1) would not affect the cardinality of the set. Also, card(R) = aleph-one by definition.

Therefore:

aleph-one equals the product of the ratios of step sizes of integers to reals and aleph-null.

Now, if we take the step size of A to be less than that of the reals, dX <dx, we can say that card(A) = (1/dX)*(aleph-null).

Because dX < dx, card(A) card(Z).

There also exists no one-to-one ratio between sets, so:

card(Z)<card(A)<card(R) if and only if, the infinitesimal is a fluid value.

If the previous statement holds true, thus, the cardinality of the alternating harmonic set can change, leading to the notion that terms were added or removed and that it is beyond a simple rearrangement.

It looks like I got a little bit carried away there. I apologize for the wall of text, and I hope I haven’t butchered the statements you’ve given with false logic, although I will admit it’s a possibility given my limited experience on the subject.

I have to thank you though. You’ve introduced me to a topic and really furthered my understanding of the theorem. I just might even pursue mathematics a bit further than I had planned.

Hi Joe,

I’m afraid I won’t have time for a detailed response after this one, since I’m getting busy for final exams and so forth.

First, a minor comment. Regarding the proof of Weierstrass’ rearrangement theorem, you say it “relies on the fact that our axioms are true.” Of course, but this is not a liability at all. Just like you can’t define every concept because of our finite vocabulary, you can’t prove everything. To get started, we need to bootstrap ourselves with some things we accept to be true without proof. For aesthetic reasons, we try to limit ourselves to as few and simple axioms as possible. You probably knew this already, I just wanted to emphasize that accepting axioms as true is not a bad thing. It’s a practical matter, because without them we couldn’t get any rigorous mathematics done! My own philosophical take on accepting our current axioms is that it’s okay because:

(1) the axioms of modern set theory are what mathematics is built upon,

(2) physics relies heavily on the results of mathematics, which ultimately goes back to set theory, and

(3) since our physical theory agrees with reality in most cases, we’ve probably got a good set of axioms.

(My reasoning in step 3 is really based on the contrapositive of that statement, namely that if we had wacky axioms, we would have wacky theorems, and the physics would disagree with the mathematical predictions. If you accept this, then you must accept statement 3, since any statement is logically equivalent to its contrapositive.)

Also, in my previous post, I said we don’t define card(A) for an arbitrary set A. That was a convenient untruth, mainly for economy of writing, because I knew the post was getting long, and I didn’t want to go into all of it. So let me clear this up once and for all: Let A be an arbitrary set. We define card(A) as the equivalence class of A under the equivalence relation of equipollence (where we say two sets A and B are equipollent if they have the same cardinality, i.e. card(A)=card(B), as defined in the previous post). At first this might sound circular, but it’s not if you think about it. Note that the cardinality of a set is *not a number* in any way. It is an equivalence class, i.e. a set. By convention, we say A is a “finite set” if we can find a bijection between A and a subset of the form {1,2,…,n} of the natural numbers, in which case we declare card(A)=n. An “infinite set” is a set which is not finite. Also, there were two typos: In the end, I meant to write card(R)>card(Z), not card(R)>=card(Z), but you probably figured that out. Finally, [equation] should have been [equation]. Anyway…

You made the same mistakes again, arguing intuitively and not rigorously! Let me show you at least two places where you did that, and then I will wrap up:

About the rearrangement of infinite series, you said “For every 3 consecutive terms, there is one positive and two negative. Therefore, card(a+)<card(a-);” But I already proved that exact statement false when I showed card(E)=card(Z)!!! Think about it: For every even integer there are two integers (the even one and its odd neighbor). But nevertheless, card(E)=card(Z). It is NOT the case that card(E)<card(Z); but this is what you’re asserting above, only you’ve changed the labeling around, letting +’s denote even integers and -’s denote all the integers. Think about it!
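As a minimal sketch of the pairing behind card(E)=card(Z), assuming the usual bijection n → 2n between the integers and the even integers, the snippet below checks on a small window that the map can be undone and never sends two integers to the same even number. The helper names to_even and from_even are made up for this illustration, and a finite check like this is only a sanity test, not a proof.

```python
def to_even(n):
    """Send the integer n to the even integer 2n."""
    return 2 * n


def from_even(m):
    """Undo to_even: send the even integer m back to m / 2."""
    if m % 2 != 0:
        raise ValueError("from_even is only defined on even integers")
    return m // 2


# A finite window of Z; the same pairing works for every integer.
window = list(range(-5, 6))
evens = [to_even(n) for n in window]
round_trip = [from_even(m) for m in evens]

print(evens)                 # the even integers paired with the window
print(round_trip == window)  # every integer is recovered from its partner
```

Each integer gets exactly one even partner and each even integer gets exactly one integer back, which is all that "same cardinality" asks for, even though the evens look half as dense.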

You do it again when you say: “card(an)=card(a+)+card(a-)=2*card(A). If card(bn)=3*card(A), how can card(an)=card(bn)? I’m reluctant to believe that.” First of all, unless you’ve done some outside reading, we haven’t defined cardinal arithmetic yet! You’re adding cardinalities and multiplying them by scalars. Where did we define that yet? Cardinal arithmetic does *not* obey the usual rules of arithmetic, just like certain aspects of infinite series don’t either. This is what gg was saying to you earlier: you can do familiar arithmetic with impunity on finite things, but when it comes to the infinite, you must be very careful and rigorous. Rest assured, cardinal arithmetic is defined, but it doesn’t obey the “usual” rules of arithmetic.
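To see why "2*card(A)" and "3*card(A)" need not differ when A is countably infinite, here is a rough sketch, assuming we model A as the natural numbers 0, 1, 2, … and take two or three tagged copies of it. The hypothetical helpers merge and split interleave the copies into a single copy of the naturals and back again; the finite check only illustrates the pattern.

```python
def merge(copy, k, copies):
    """Send element k of copy number `copy` to a single natural number."""
    return copies * k + copy


def split(n, copies):
    """Undo merge: recover (copy, k) from the natural number n."""
    return n % copies, n // copies


results = {}
for copies in (2, 3):
    # Take the first 10 elements of each copy and interleave them.
    images = sorted(
        merge(c, k, copies) for c in range(copies) for k in range(10)
    )
    # The images fill 0 .. copies*10 - 1 with no gaps and no repeats.
    results[copies] = images == list(range(copies * 10))
    print(copies, results[copies])
```

Because two copies and three copies of the naturals can both be matched one-to-one with a single copy, multiplying an infinite cardinality by 2 or 3 does not produce a larger one.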

The same goes for the rest of your argument below, but I don’t have time to respond to all of it.

I say this once again: You have to start arguing formally, not intuitively. I’m not saying intuition is a bad thing. On the contrary, we desperately need our intuition, built on experience, to guide us when we’re searching for proofs. I just mean that intuitive reasoning cannot be used in the end, when you finally write down a rigorous proof. You’ve made several bold statements, such as “this would prove,” or “I could guarantee,” but these statements are not at all convincing, because you haven’t actually presented a proof! In other words, mathematicians are skeptics: we don’t believe claims of statements without seeing the proof (notwithstanding axioms). It’s better to honestly say “I think” or “my gut feeling is” rather than to say “I can prove,” unless you actually proceed to give a proof! 🙂

If you’re looking for a good book on an introduction to higher mathematics and proofs, I suggest Wolf’s book “Proof, Logic, and Conjecture: The Mathematician’s Toolbox.” Good luck with your journey into set theory and higher mathematics. It is a difficult one, but it is also fun and ultimately rewarding.

Very nice writeup, and a very nice set of comments by Mike.

I think this might be an interesting aside: http://en.wikipedia.org/wiki/Gabriel%27s_Horn

Thanks for the link! I had heard about such objects before, but had never read a detailed description of them.

Nice information for the Riemann Series Theorem. One thing I noticed though is that the idea that it’s counterintuitive just because it’s about infinity, and that infinity is just weird, maybe doesn’t capture the whole point.

The interesting thing is that the theorem says something useful about finite data, as shown above in your graphs of partial sums.

This stuff has applications in fields like computer science where the data are actually finite.

In the finite case, the result seems less paradoxical, because when you terminate the series, the numbers you have added together are in fact different depending on the rearrangement of terms, so there is no violation of the commutative property of addition.
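The finite picture can be sketched numerically. The snippet below assumes the rearrangement discussed earlier in the thread (one positive term followed by two negative terms of the alternating harmonic series) and compares the first 300 terms of each ordering; the generator names and the cutoff N are arbitrary choices for this illustration.

```python
from fractions import Fraction
from itertools import islice
import math


def alternating_harmonic():
    """Yield 1, -1/2, 1/3, -1/4, ... in the usual order."""
    n = 1
    while True:
        yield Fraction((-1) ** (n + 1), n)
        n += 1


def rearranged():
    """Yield the same terms as one positive followed by two negatives."""
    k = 1
    while True:
        yield Fraction(1, 2 * k - 1)
        yield Fraction(-1, 4 * k - 2)
        yield Fraction(-1, 4 * k)
        k += 1


N = 300  # number of terms kept from each ordering
original = list(islice(alternating_harmonic(), N))
shuffled = list(islice(rearranged(), N))

# Terms that appear in one truncated list but not in the other.
only_in_original = set(original) - set(shuffled)
only_in_shuffled = set(shuffled) - set(original)

print(len(only_in_original), len(only_in_shuffled))
print(float(sum(original)), math.log(2))      # partial sum vs ln 2
print(float(sum(shuffled)), math.log(2) / 2)  # partial sum vs (ln 2)/2
```

After any finite cutoff the two lists really do contain different numbers, so different partial sums are no surprise; the rearranged ordering has simply used up negative terms faster than positive ones.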

Perhaps in this case at least, we can see infinity as merely a tool that enables us to generalize about finite realities.

how many possible twitter accounts? = (37^16-1) /36.??

how many possible 6 letter-digit char number plates?

= (36^7-1)/35 ?? insert +*-/ 2345 67 8910

= [2240] e6 tons Aluminium.

oeis integer sequences. sum( mu(n)/n) changes sign. at n =…………….. 51 ooo ??

the dominion wtd sell 9-11-1991.

?? wellington new zealand partial harmonic series with mobius function coefficients.

twitter.com/mcDOnewt hang on i’ll find A00 number.

http://oeis.org/search?q=donald+changes+sign&sort=&language=english

2002. low water mark. Donald S. McDonald
