(Update: I fixed the discussion on LIDAR speed detection, thanks to edweird’s observations in the comments.)

This is pretty neat. Via Crooks & Liars, we have Radiohead’s new music video for “House of Cards” (embedded below the fold), which was made without any cameras at all. From the YouTube description,

In Radiohead’s new video for “House of Cards”, no cameras or lights were used. Instead, 3D plotting technologies collected information about the shapes and relative distances of objects. The video was created entirely with visualizations of that data.

There’s more information on the making of the video at Radiohead’s Google page, where we learn,

No cameras or lights were used. Instead two technologies were used to capture 3D images: Geometric Informatics and Velodyne LIDAR. Geometric Informatics scanning systems produce structured light to capture 3D images at close proximity, while a Velodyne Lidar system that uses multiple lasers is used to capture large environments such as landscapes. In this video, 64 lasers rotating and shooting in a 360 degree radius 900 times per minute produced all the exterior scenes.

For those not familiar with the acronym, LIDAR stands for LIght Detection And Ranging; it is the optical equivalent of RADAR, which uses radio waves. A basic LIDAR system fires pulses of light at a target and measures the time required for the reflected signal to return. Because the pulse travels to the target and back, the distance to the target is half the round-trip time multiplied by the speed of light, so the time delay is a direct measure of range. LIDAR can also be used (and is used by the police) to determine the speed of objects: by making a pair of distance measurements a known time apart, one can calculate the speed of the vehicle. This speed-measuring technique differs from that of ordinary RADAR, which aims a quasi-monochromatic signal at a target and measures the Doppler shift of its frequency to determine the relative speed. It’s probably worth mentioning that rangefinding and Doppler speed detection are somewhat mutually exclusive measurements: rangefinding requires a short pulse, while Doppler speed detection requires a long, single-frequency wave. A single signal typically cannot do both simultaneously.
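The relationships in the paragraph above fit in a few lines of Python. This is an illustrative sketch, not how any particular instrument processes its data. The function names are hypothetical, the 24.15 GHz radar frequency is an assumed K-band value, and the readings in the usage section are made up.

```python
# Minimal sketch: time-of-flight ranging, two-point speed, and radar Doppler shift.
C = 299_792_458.0  # speed of light in vacuum, m/s (air's refractive index ~1.0003 ignored)


def range_from_round_trip(t_round_trip_s: float) -> float:
    """One-way distance to the target from a pulse's round-trip time.

    The pulse travels out and back, so the distance is c * t / 2.
    """
    return C * t_round_trip_s / 2.0


def speed_from_two_ranges(r1_m: float, r2_m: float, dt_s: float) -> float:
    """Range rate from two ranges measured dt_s apart.

    Negative means the target is approaching, positive means it is receding.
    """
    return (r2_m - r1_m) / dt_s


def radar_doppler_shift(v_mps: float, f0_hz: float) -> float:
    """Doppler shift of a radar echo from a target moving at v_mps along the beam.

    Two-way (reflected) shift, valid for v << c: delta_f = 2 * v * f0 / c.
    """
    return 2.0 * v_mps * f0_hz / C


if __name__ == "__main__":
    # A 1-microsecond round trip corresponds to roughly 150 m of range.
    print(range_from_round_trip(1e-6))
    # Hypothetical readings 0.1 s apart: the car closed 3 m, i.e. about -30 m/s.
    print(speed_from_two_ranges(150.0, 147.0, 0.1))
    # Assumed 24.15 GHz (K-band) radar watching the same 30 m/s car: a few kHz shift.
    print(radar_doppler_shift(30.0, 24.15e9))
```

The factor of two appears in both the range and the Doppler formulas for the same reason: the signal makes a round trip to the target.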

One advantage of using LIDAR as opposed to RADAR is the much shorter wavelength of light. Generally, one can only resolve features that are at least roughly as large as the wavelength of the imaging wavefield. RADAR systems use signals with wavelengths from millimeters to tens of meters, while visible light has a wavelength on the order of a micron (0.000001 meters). For long-range applications, however, LIDAR is limited by the fact that visible light is subject to significant distortion by the atmosphere.
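To put a number on "much shorter", here is a quick wavelength comparison using λ = c / f. Both values are assumptions chosen for illustration: 24.15 GHz as a representative police-radar (K-band) frequency and 500 nm (green) as a representative visible wavelength.

```python
# Rough comparison of imaging wavelengths (illustrative values, see lead-in).
C = 299_792_458.0  # speed of light, m/s


def wavelength_m(frequency_hz: float) -> float:
    """Wavelength from frequency: lambda = c / f."""
    return C / frequency_hz


radar_frequency_hz = 24.15e9   # assumed: a K-band police-radar frequency
visible_wavelength_m = 500e-9  # assumed: green light, 0.5 micron

radar_wavelength_m = wavelength_m(radar_frequency_hz)
ratio = radar_wavelength_m / visible_wavelength_m

print(f"radar wavelength:   {radar_wavelength_m * 100:.2f} cm")
print(f"visible wavelength: {visible_wavelength_m * 1e6:.2f} micron")
print(f"radar / visible:    {ratio:,.0f}")
```

Under these assumptions the radar wavelength is about 1.2 cm, some four orders of magnitude longer than the optical one, which is why LIDAR can map fine detail that radar cannot.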

In any case, I was unaware of how common LIDAR has become in commercial applications. The Velodyne website lists the following applications:

- Autonomous vehicle navigation (commercial and military)

- Automotive safety systems, adaptive cruise control, lane following

- 3-D mapping

- Surveying – mobile, as-built

- Robotics

- Research

- Autonomous agricultural vehicles

- Movie set rendering

- Mining vehicles, profile monitoring

- Tunnel surveys

- Security – building perimeter monitoring

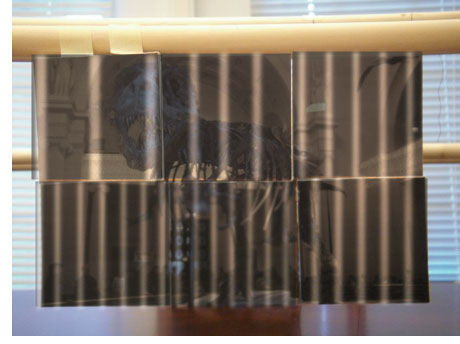

Structured light is another quite interesting technique. As we’ve discussed before on this blog, in particular in the context of making anamorphic images, one generally cannot get depth information about an object from measurements made at a single point of view. I demonstrated this with my anamorphic T-Rex. Seen from a single, special point of view, the image looks almost normal:

The six individual photographs, however, are at very different distances in space. From another angle, the picture looks like this:

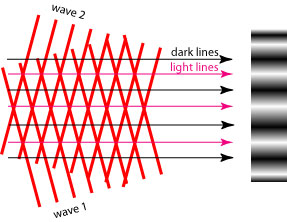
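The depth ambiguity can be made concrete with an idealized pinhole-camera model (an assumption: real cameras add lens distortion, but the argument is unchanged). A point at (X, Y, Z) lands on the image at (fX/Z, fY/Z), so every point along the same line of sight lands on the same image point. A photograph moved three times farther away and enlarged three times therefore looks exactly the same, which is the whole trick behind the anamorphic T-Rex. The function names are hypothetical.

```python
# Ideal pinhole camera: why a single viewpoint loses depth.
def project(point, focal_length=1.0):
    """Perspective projection of a 3D point (X, Y, Z), with Z > 0 in front of the camera."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera (Z > 0)")
    return (focal_length * x / z, focal_length * y / z)


def scale_along_ray(point, factor):
    """Move a point along its line of sight from the pinhole by scaling every coordinate."""
    return tuple(factor * c for c in point)


near = (1.0, 2.0, 4.0)
far = scale_along_ray(near, 3.0)  # three times farther away on the same ray

print(project(near))  # (0.25, 0.5)
print(project(far))   # (0.25, 0.5): same image point, different depth
```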

Is there any way to get the depth information from measurements only at the single, special viewpoint? Yes, if we add structure to the light field used to illuminate the scene. Conceptually, the simplest way to do this is to use as a light source a pair of interfering monochromatic plane waves, as illustrated below (adapted from Wikipedia):

The interference of the two plane waves produces a light field which contains a collection of parallel light and dark lines. The image on the right is an illustration of what this pattern would look like when projected on a flat surface.
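For two equal-amplitude monochromatic plane waves of wavelength λ crossing at a full angle θ, the intensity across the beams is I(x) = 2 + 2cos(2k sin(θ/2) x) (unit amplitudes, k = 2π/λ), so the bright lines repeat with period Λ = λ / (2 sin(θ/2)) and do not change along the beams. The sketch below assumes the beams are tilted symmetrically about the z axis; the function names and numerical values are hypothetical.

```python
import cmath
import math


def fringe_period(wavelength_m: float, full_angle_rad: float) -> float:
    """Spacing between bright lines for two plane waves crossing at full_angle_rad."""
    return wavelength_m / (2.0 * math.sin(full_angle_rad / 2.0))


def two_beam_intensity(x_m: float, wavelength_m: float, full_angle_rad: float) -> float:
    """|E1 + E2|^2 at transverse position x for two unit-amplitude plane waves.

    The beams are tilted by +/- full_angle_rad / 2 from the z axis. Their shared
    exp(i k z cos(theta/2)) factor has unit modulus, so it drops out of the
    intensity and the fringes are the same at every z.
    """
    k = 2.0 * math.pi / wavelength_m
    kx = k * math.sin(full_angle_rad / 2.0)  # transverse wavenumber of each beam
    field = cmath.exp(1j * kx * x_m) + cmath.exp(-1j * kx * x_m)
    return abs(field) ** 2  # equals 2 + 2 cos(2 kx x)


if __name__ == "__main__":
    wavelength = 500e-9          # assumed: green light
    angle = math.radians(60.0)   # assumed crossing angle; sin(30 deg) = 0.5
    period = fringe_period(wavelength, angle)  # equals the wavelength here
    # Sample one period: bright (4) at the ends, dark (0) in the middle.
    samples = [two_beam_intensity(i * period / 8, wavelength, angle) for i in range(9)]
    print(period)
    print([round(s, 3) for s in samples])
```

Inverting the formula, sin(θ/2) = λ / (2Λ): to get bright lines about an inch apart with 500 nm light, the beams would have to cross at only about 2 × 10^-5 radians.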

How does this pattern help us? When we project it onto a complex object, the lines will appear to be spaced farther apart or closer together depending on whether the surface in question is closer to or farther from our perspective, respectively. By analyzing the spacing and curvature of the lines, one can determine a depth profile of the object, at least from that one perspective.
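The first step of that analysis, measuring how far apart the bright lines are, can be sketched on a single row of image pixels. This is a deliberately simple, hypothetical peak finder with no noise handling. Converting the measured spacings into actual depths would require calibrating the specific projector and camera geometry, which is not shown here.

```python
import math


def bright_line_positions(profile):
    """Indices of local maxima along one scanline (first sample of any flat top).

    No smoothing or thresholding: adequate for clean synthetic data only.
    """
    return [
        i
        for i in range(1, len(profile) - 1)
        if profile[i] > profile[i - 1] and profile[i] >= profile[i + 1]
    ]


def local_spacings(profile, pixel_pitch=1.0):
    """Distance between each pair of neighbouring bright lines."""
    peaks = bright_line_positions(profile)
    return [(b - a) * pixel_pitch for a, b in zip(peaks, peaks[1:])]


if __name__ == "__main__":
    # Synthetic scanline: fringes every 10 pixels on one surface, then every 20.
    left = [1 + math.cos(2 * math.pi * x / 10) for x in range(40)]
    right = [1 + math.cos(2 * math.pi * x / 20) for x in range(60)]
    print(local_spacings(left + right))  # [10.0, 10.0, 10.0, 20.0, 20.0]
```

A real system would smooth the data and track each line in two dimensions, since the curvature of the lines carries information as well.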

As an example, here’s a crude simulation of what my T-Rex image would look like if illuminated with structured light with a spacing of 1 inch between bright lines:

From the spacing and the thickness of the bright lines, we can easily determine the relative depth of the different parts of the image. Clearly, the upper-left image is the closest to the source, while the bottom center image is the farthest. This is a simple example; real structured-light imaging is used to measure the shapes of complicated objects, in which case the pattern of lines is curved. An example (from Wikipedia) is below:

Structured-light imaging also has many applications. From Wikipedia, we have the following list:

- Precision shape measurement for production control (e.g. turbine blades)

- Reverse engineering (obtaining precision CAD data from existing objects)

- Volume measurement (e.g. combustion chamber volume in motors)

- Classification of grinding materials and tools

- Precision structure measurement of ground surfaces

- Radius determination of cutting tool blades

- Precision measurement of planarity

- Documenting objects of cultural heritage

- Skin surface measurement for cosmetics and medicine

- Body shape measurement

- Forensic inspections

- Road pavement structure and roughness

- Wrinkle measurement on cloth and leather

- Measurement of topography of solar cells

Funny how so much interesting optics can go into a music video! Speaking of which, here’s the video:

A great demonstration and very nicely explained. It’s great to see that someone saw so much artistic potential in a scientific tool.

The LIDAR systems used by law enforcement to measure the speed of cars, made by LTI, Kustom, Stalker, Laser Atlanta, and others, use the method you described, in which the “time of flight” of the pulse and its return is measured.

I do not think any of the LIDAR systems employed by law enforcement for the purpose above use the “Doppler shift” of the light field to measure the target’s speed.

Some systems using the “time of flight” method DO provide audio feedback to the operator using sounds that mimic the Doppler audio feedback that radar-based speed enforcement equipment has provided for years, since police LIDAR operators are usually already familiar with that feedback from using radar devices for speed enforcement.

edweird: “I do not think any of the LIDAR systems employed by law enforcement for the purpose above use the “Doppler shift” of the light field to measure the target’s speed.”

So it seems to be! Thanks for the comment. I’m more familiar with RADAR applications than LIDAR applications, and didn’t think to check the details. I’ve updated the post to fix the inaccuracy.

For those who might be interested, a LIDAR system will make several range measurements to the target vehicle. Knowing the time between measurements, one can calculate the speed with the usual distance-over-time calculation.
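With more than two range readings, one natural way to combine them is a least-squares straight-line fit of range against time, whose slope is the speed. This is an assumption for illustration: the comments do not say how commercial units actually process their readings, and the function name and readings below are hypothetical.

```python
def fit_speed(times_s, ranges_m):
    """Least-squares slope of range versus time, in m/s.

    Negative means the target is approaching. Needs at least two distinct times.
    """
    n = len(times_s)
    if n != len(ranges_m) or n < 2:
        raise ValueError("need matching time and range lists with at least two points")
    t_mean = sum(times_s) / n
    r_mean = sum(ranges_m) / n
    cov = sum((t - t_mean) * (r - r_mean) for t, r in zip(times_s, ranges_m))
    var = sum((t - t_mean) ** 2 for t in times_s)
    if var == 0:
        raise ValueError("measurement times must not all be equal")
    return cov / var


if __name__ == "__main__":
    # Hypothetical readings every 0.1 s from a car approaching at about 30 m/s,
    # with a few centimetres of made-up measurement jitter.
    times = [0.0, 0.1, 0.2, 0.3, 0.4]
    ranges = [150.02, 146.99, 144.01, 140.98, 138.00]
    speed = fit_speed(times, ranges)
    print(f"{speed:.2f} m/s ({abs(speed) * 3.6:.1f} km/h, approaching)")
```

Fitting all the readings at once, rather than differencing just two, averages down the effect of jitter in any single range measurement.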

Of all places, Radio Shack has a nice pamphlet explaining the different methods of speed detection!

I grant that this is an exceptionally innovative way to film a music video, but Radiohead’s statement that “no cameras or lights were used” is just so totally wrong. While it’s correct that LIDAR doesn’t use a camera, it does use “64 lasers”. What do they think is coming out of the lasers: some sort of magic pixie dust? Yeah, it’s infrared light, but it’s still light.

Claiming that the “structured light” method is cameraless and lightless is even more baffling. It has freakin’ “light” in the freakin’ title! Furthermore, a camera is used to capture the live images of the illuminated object. The product they used is actually titled “The GeoVideo Real-Time Motion Capture Camera”. Camera… see… right there at the end of the title.

Gaah!!!

Next time I run into Radiohead, I am totally going to smack them with my Ph.D.

PD wrote: “I grant that this is an exceptionally innovative way to film a music video, but Radiohead’s statement that “no cameras or lights were used” is just so totally wrong.”

I see your point. I glossed over their actual statement because I saw what they were getting at. What they should have said is something along the lines of:

“Nothing you are seeing is the result of conventional camera and lighting techniques. Instead, sensor information was recorded (in the case of structured light imaging, with a camera) and the data converted into three-dimensional information about the detected objects. This information was then reconstructed via computer to produce the virtual images used in the video.”

I think this is usually referred to as ‘indirect imaging’. I’m guessing that their techs were struggling to find a concise way to explain what exactly was being done…

Reading my previous entry, I sound a lot more upset than I actually was. I really liked the video and how it was shot. However, Radiohead’s oversimplification of the technique obscures the fact that this is still an optical technique (i.e. it uses light), and since we both work in that field, it really ruffled my feathers.
