A few weeks ago, a new optical imaging system grabbed headlines throughout the world. This system, labeled a “picosecond camera”, can seemingly record images so fast that it can actually track the motion of light itself! Consider the following video taken by the camera (source):

If you were to be shown this video without any context, you would justifiably think that it represents someone slowly sweeping a “searchlight” of sorts over the cylinder and tomato. What you are really seeing, however, is the evolution of a spherical wave of light as it spreads from the lower right corner of the image towards the upper left. Of course, you are seeing the light reflected from the various objects as it spreads, but the camera is so fast that it is able to make a movie of a light pulse as it travels.

There are caveats, of course: the finished image that you are seeing is not simulated, but is nevertheless the result of computational techniques and many, many repeated experiments. This isn’t really a “bug”, though, but a “feature”: the researchers at the MIT Media Lab’s Camera Culture group have devised a clever method to show us something truly unique, and these visualizations may have practical applications.

To understand how the “picosecond camera” works, we first need to talk a bit about the speed of light, and the limitations of conventional cameras. As has been known for centuries, the speed of light (denoted $c$) is really, really fast:

$c \approx 3\times 10^{8}$ meters/second, or 300 million meters per second! To put this another way, in a billionth of a second (a nanosecond), light travels 30 centimeters, or roughly one foot.

Now suppose we wish to use a camera to record the motion of light as it interacts with an ordinary object, like a tomato. Estimating a tomato to be 6 centimeters in diameter, we would presumably need to have light travel no more than 3 millimeters during a single frame of our movie, which would give us 20 frames of images during the interaction. This would require a camera with a shutter speed of $10^{-11}$ seconds, or a hundredth of a billionth of a second. If we want a good quality movie of light moving, however, we would need light to travel no more than 0.6 millimeters during a single frame, which would give us 100 frames of images during the interaction; this requires a camera with a shutter speed of

$2\times 10^{-12}$ seconds, or two picoseconds!
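The frame-time estimate can be checked with a few lines of Python. This is only a sketch of the arithmetic in the text: the function name `frame_time` is made up for illustration, and the speed of light is rounded to 3 × 10^8 meters/second as above.

```python
# Speed of light, rounded as in the text (assumption: 3e8 m/s, not the exact value).
C = 3.0e8  # meters per second


def frame_time(object_size_m: float, n_frames: int, c: float = C) -> float:
    """Exposure time per frame if light should cross the object in n_frames frames."""
    distance_per_frame = object_size_m / n_frames  # meters light may travel per frame
    return distance_per_frame / c                  # seconds


tomato = 0.06  # a 6 cm tomato, in meters

for frames in (20, 100):
    t = frame_time(tomato, frames)
    print(f"{frames} frames: {t:.1e} s per frame ({t * 1e12:.0f} ps)")
```

With 20 frames the required exposure comes out at about 10 picoseconds, and with 100 frames at about 2 picoseconds, matching the figures quoted above.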

Ordinary cameras fall far short of these capabilities. The fastest DSLR cameras have top shutter speeds of around sixty microseconds, or 60 millionths of a second. Light travels 60,000 feet — or 11 miles — during the time that the shutter is open, clearly inadequate for visualizing the motion of light.
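For the skeptical, here is a rough sketch of that calculation. The helper name `light_distance_m` is hypothetical, and the speed of light is again rounded to 3 × 10^8 meters/second; the text's "one foot per nanosecond" rule of thumb gives 60,000 feet, while the slightly more careful conversion below lands a little under that.

```python
C = 3.0e8                # meters per second, rounded
METERS_PER_FOOT = 0.3048
FEET_PER_MILE = 5280


def light_distance_m(exposure_s: float, c: float = C) -> float:
    """Distance light travels while the shutter is open, in meters."""
    return c * exposure_s


d_m = light_distance_m(60e-6)   # 60 microsecond shutter
d_ft = d_m / METERS_PER_FOOT
d_mi = d_ft / FEET_PER_MILE

print(f"{d_m:.0f} m = {d_ft:.0f} ft = {d_mi:.1f} miles")
```

Either way the answer is roughly 18 kilometers, or about 11 miles.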

More specialized cameras with non-mechanical shutters can have faster shutter speeds, but still fall short of the ideal target. An illustrative example is the intensified charge-coupled device camera (ICCD) which, as the name implies, is an enhanced form of a CCD (charge-coupled device) detector that is found in many ordinary digital cameras. The ICCD can produce shutter speeds of a nanosecond ($10^{-9}$ seconds), but this still falls short of our required time resolution; furthermore, the maximum frame rate of the camera is on the order of 100 frames/second, clearly too slow to capture light in motion! It is worth looking briefly at the principle of the ICCD, however, as the fundamental component of the picosecond camera possesses many similarities.

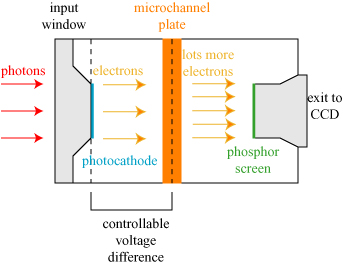

A simple schematic of an ICCD is shown below. Photons (particles of light) are incident upon an input window in the ICCD. They strike the photocathode, where they kick off a proportional number of electrons; in this way, a beam of light is converted into an electrical current. These electrons strike a microchannel plate (MCP), which is a honeycomb of glass channels with a high electrical voltage across its width. The electrons bounce around in the microchannels, each releasing many more electrons in the process. The MCP therefore proportionally amplifies the electrical current incident upon it. Finally, this large stream of electrons is converted back into light at the phosphor screen, which glows when the electrons hit it. This light, which is proportional to the amount of light originally incident on the ICCD, is then channeled to be read out at an ordinary CCD sensor.

This might seem like a lot of wasted effort, as we have light coming in, and at the end of the device we have light going out to an ordinary CCD anyway! This system provides two significant advantages over a CCD alone, however. The first is signal enhancement: the microchannel plate multiplies the number of electrons, and hence the signal, received at the final CCD. This allows one to perform imaging even in low-light; in fact, this sort of configuration is used in night-vision technology. The other advantage is a very fast electrical shutter: a positive voltage difference can be applied between the photocathode and the MCP extremely quickly, resulting in a force on the electrons that keeps them from ever reaching the MCP. This voltage switch acts as the shutter for the camera, and can be “opened” and “shut” with nanosecond speeds.

It should be noted that these two advantages go hand-in-hand: when the “shutter” is open only for a nanosecond, very little light makes it into the camera. The signal enhancement becomes necessary to see anything when the shutter speeds are so fast.

The shutter speed is still not fast enough to capture an image of light motion, however. Furthermore, at 100 frames/second, the frame rate is inadequate to make a movie of light. This latter limitation comes from at least two sources. First, the phosphor screen that converts electrons back into light will continue to glow for microseconds after the electrons hit. One cannot take another image until the screen has “reset” and is no longer glowing from the previous image. Second, there is a time delay involved in the recording and storage of the information from the CCD at the end of the device. In order to make a movie of the motion of light we would, loosely speaking, need camera electronics that move at light speeds!*

There is a very different type of camera, however, that achieves a time resolution of roughly 2 picoseconds with a very different means of operation, and essentially takes images back-to-back: the streak camera. A simple schematic of a streak camera is shown below; it is worthwhile to compare it with the ICCD discussed above.

This system possesses essentially the same components as the ICCD, but it functions in a fundamentally different way. Photons (color-coded in order of arrival, blue-green-red) enter through a narrow horizontal slit and are converted into electrons at the photocathode. At a predetermined trigger (the start of the measurement), the voltage between the sweep electrodes is changed rapidly — it is “swept”. This changing voltage deflects electrons arriving at different times into different directions. These electrons are then multiplied by the MCP and converted back to light on the phosphor screen.

The net result? Photons arriving at different times at the streak camera end up being recorded at different places on the phosphor screen! This is illustrated below; four pulses coming in at four different times (red arrives first, then blue, then purple, then yellow) get deflected to different heights on the screen.

The speed advantage of this is evident: we record different time information at different places on the screen, and therefore can get the space and time information “all at once”. The process is incredibly fast; a commercial Hamamatsu C5680 streak camera can record a “movie” of light with a 2 picosecond time resolution, which is exactly what we require to capture the motion of light!
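The time-to-position idea can be mimicked in a toy model. The sketch below assumes a perfectly linear sweep, so that arrival time maps directly to a row on the screen; the 1 nanosecond sweep window and 500 rows are illustrative numbers chosen to give 2 picoseconds per row, not specifications of any real streak camera, and `streak_image` is a made-up name.

```python
def streak_image(events, n_cols, n_rows=500, window_ps=1000.0):
    """Toy streak camera: bin photon events into a (time row, slit column) image.

    events    -- list of (x, t_ps): column along the slit, arrival time in picoseconds
    n_rows    -- number of rows on the screen (assumed value)
    window_ps -- duration of the voltage sweep (assumed value)
    """
    ps_per_row = window_ps / n_rows          # 2 ps per row with the defaults
    image = [[0] * n_cols for _ in range(n_rows)]
    for x, t_ps in events:
        if not 0 <= t_ps < window_ps:
            continue                         # arrived outside the sweep: not recorded
        row = int(t_ps // ps_per_row)        # later arrival -> larger deflection
        image[row][x] += 1
    return image


# Three photons at slit position 3, one at position 7, one arriving too late.
events = [(3, 0.0), (3, 1.0), (3, 2.5), (7, 999.9), (5, 1200.0)]
img = streak_image(events, n_cols=10)

print(img[0][3], img[1][3], img[499][7])
```

The vertical axis of the resulting image is time, not height, which is exactly why only a single horizontal line of the scene can be recorded at once.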

This speed comes at a cost, however; because we are using the vertical direction to place light at different times, we can only image light through a narrow slit. In terms of an image of a tomato, each movie of the tomato can only image a horizontal row of pixels, as illustrated below:

The solution to this is simple enough in words, but quite tricky in practice: take a movie of light propagating over one “slice” of the tomato, then change the height of the tomato, and take a movie of light traveling over another “slice”. If the light source produces the same pulse of light every time, and the timing of the recording is exactly the same, one can then computationally stitch together all the “slices” to produce a complete movie of light traveling over the object.
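In code, the stitching step amounts to a reordering of indices. The following is a minimal sketch under the assumption that every slice was recorded with identical timing and the same number of time bins; it is not the researchers' actual reconstruction software, and `stitch_slices` is a hypothetical name.

```python
def stitch_slices(slices):
    """Reorder slices[y][t][x] (one streak image per height y) into movie[t][y][x]."""
    n_t = len(slices[0])
    if any(len(s) != n_t for s in slices):
        raise ValueError("all slices must have the same number of time bins")
    # Frame t of the movie is row t of every slice, stacked by height.
    return [[list(slices[y][t]) for y in range(len(slices))] for t in range(n_t)]


# Toy data: 3 heights, 4 time bins, 2 columns; each value encodes its own (y, t, x).
slices = [[[100 * y + 10 * t + x for x in range(2)] for t in range(4)]
          for y in range(3)]

movie = stitch_slices(slices)
print(len(movie), "frames of", len(movie[0]), "rows x", len(movie[0][0]), "columns")
print(movie[2])  # the full 2D frame at time bin 2
```

Each streak image contributes one row to every frame, so a scene with N rows of pixels needs at least N separate recordings.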

The experiment itself used a titanium-sapphire (Ti:Sapphire) laser that can produce very regular pulses which are 50 femtoseconds in duration (50 millionths of a billionth of a second!). Each pulse gets divided at a beamsplitter: part of the pulse is sent to a detector to trigger the camera, while the other part goes to illuminate the scene. In the initial conference proceeding describing the research, the different slices were produced by raising/lowering the object; it seems that more recent experiments** use tilting mirrors to virtually raise and lower the scene from the camera’s perspective. A simple schematic (adapted from the conference proceeding) is shown below.

The laser pulse passes through a pinhole before illuminating the scene; this results in a spherical wave illuminating the object instead of a highly directional laser beam.

As we noted previously, the amount of light arriving at the camera is quite small, due in large part to the time resolution: very little light goes by in 2 picoseconds! To compensate for this, 100 movies were taken for each “slice” of the object; the final movie is therefore not only a combination of multiple “slices” of the object, but is also an average over many movies for each slice.
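Why averaging helps can be seen in a small simulation. This sketch assumes simple Gaussian noise added to a weak signal, which is a simplification (real photon counting noise is not Gaussian), and all names and numbers other than the count of 100 repeats are illustrative.

```python
import math
import random


def noisy_recording(signal, sigma, rng):
    """One simulated recording: the true signal plus Gaussian noise (an assumption)."""
    return [s + rng.gauss(0.0, sigma) for s in signal]


def average(recordings):
    """Average many recordings of the same slice, sample by sample."""
    n = len(recordings)
    return [sum(vals) / n for vals in zip(*recordings)]


def rms_error(estimate, truth):
    return math.sqrt(sum((e - t) ** 2 for e, t in zip(estimate, truth)) / len(truth))


rng = random.Random(0)  # fixed seed so the run is repeatable
signal = [math.exp(-((i - 100) / 15.0) ** 2) for i in range(200)]  # a weak pulse
sigma = 1.0             # noise as large as the signal peak

single = noisy_recording(signal, sigma, rng)
averaged = average([noisy_recording(signal, sigma, rng) for _ in range(100)])

print(f"RMS error, one recording: {rms_error(single, signal):.2f}")
print(f"RMS error, 100 averaged:  {rms_error(averaged, signal):.2f}")
```

For independent noise, averaging N recordings reduces the noise by roughly a factor of the square root of N, so 100 repeats buy about a tenfold improvement, provided the laser pulse and the trigger timing are repeatable enough that every recording shows the same event.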

Nevertheless, this camera is a wonderful achievement! It has potential application in medical imaging, as the propagation of light through complicated media such as human tissue is still not completely understood. Furthermore, the camera can be used to further our understanding of how light scatters off of objects and test our existing models of light scattering.

I like to think of this camera as fulfilling a dream first envisioned by Galileo (1564-1642) some 400 years ago. Galileo made one of the first serious attempts to measure the speed of light, by placing two people with lanterns on distant mountaintops. The first lantern-holder would open his lantern, the second would open his on seeing the signal of the first, and the first holder could measure the round-trip time and deduce the speed. This attempt failed due to the incredible speed of light, but now we can build cameras that can capture the motion of light and slow it to a crawl!

********************

*In 2009, another high-speed imaging technique known as STEAM was developed that can take 6.1 million frames/second with a shutter speed of a half-nanosecond ($5\times 10^{-10}$ seconds) by the use of a specialized infrared laser source, but this too is too slow to really capture the motion of light.

** A YouTube video by the experimental group is embedded below. It is a nice video, though I take some issue with the description of “visualizing photons”. The camera is not in any way imaging individual photons.

***********************

Andreas Velten, Moungi Bawendi, & Ramesh Raskar (2011). Picosecond camera for time-of-flight imaging. Imaging Systems Applications, OSA Technical Digest.

Great article! If only more popular science writing could be this informative.

One thing, you mention that the fastest DSLR speeds are around 60 microseconds, or 60 millionths of a second. It seems to me that light will then move a distance on the order of meters, not 11 miles. Should the shutter speed have been milliseconds instead of microseconds?

Hmm… I think I got it right in my post! Light moves 1 foot in a billionth of a second; this means that it moves 1000 feet in a millionth of a second, and in 60 millionths of a second, or 60 microseconds, it moves 60,000 feet. Since 1 mile = 5280 feet, that is about 11 miles. Light is really, really fast! 🙂

I agree, your write up was indeed very clear and informative. Much appreciated, thank you!

Thank you!

As you have beautifully explained in the post “Optical coherence tomography and the art world”, if you can capture the light pulse fast enough, you can do depth discrimination (what we biologists call “optical sectioning”) similar to ultrasound. Recently an article from their group, “Recovering Three-dimensional Shape Around a Corner using Ultrafast Time-of-Flight Imaging”, appeared in Nature Communications. Since the exposure time of their camera is nearly 2 picoseconds, they claim a depth discrimination ability of 400 µm.

I also found that light was imaged with a 10 picosecond exposure time 40 years ago by Michel Duguay using the optical Kerr effect [Light photographed in flight, Am. Sci. 59, 551 (1971)]. You should look at the article. The image is so beautiful.

What an enlightening article (^_^)