About a week ago, NASA announced some really good and unusual news. The National Reconnaissance Office, which operates the United States’ spy satellites, had some extra unused “hardware” to donate to the space agency: two Hubble-quality space telescopes, initially designed to spy on the Earth!

The Hubble Space Telescope, image via Wikipedia. Thanks to the NRO, NASA now essentially has two extra ones.

This is undoubtedly a great boon for NASA, which has been suffering under budget cuts for quite some time, even resulting in a recent bake sale by scientists. It will take years for the new telescopes to be used on a mission, but they could still be launched sooner, and for much cheaper, than comparable projects.

This raised an interesting question on Twitter, posed by Colin Schultz and John Rennie: if they had actually been used as spy satellites, what would these super telescopes have been able to see on the ground? It’s a fascinating question, and leads into a nice basic discussion of the optical resolution of imaging systems. In other words, what is the smallest detail that could be picked up by one of these telescopes in orbit?

As a starting point, it’s worth noting that there are typically a number of practical limitations to image-forming systems. The foremost of these are aberrations, which are distortions in the lenses or mirrors of the system that degrade the quality of the image. A familiar example of this is astigmatism, which also occurs in the human eye and is mitigated with corrective lenses. Because a mirror or a lens has to have a very precise curvature to produce an ideal image, bigger imaging optics tend to be more susceptible to aberrations. The Hubble telescope itself, after deployment in 1990, was found to have an unacceptable degree of spherical aberration; this was fortunately reduced by the use of corrective optics. (The telescope was, in essence, given eyeglasses!)

Even if we manage to make a system that is completely free of defects, it is still subject to a fundamental limitation: the wave nature of light. Waves of all kinds, including light, have a tendency to spread as they propagate. Even highly directional and narrow laser beams will eventually become quite wide; laser beams used to precisely measure the distance from the Earth to the Moon end up 1.5 km wide on the Moon’s surface!

When light is forced through a narrow aperture in an opaque screen, it spreads rapidly on the other side in a non-geometric manner, in a phenomenon known as diffraction. Roughly speaking, there is an inverse relationship between the size of the aperture and the rate at which the light spreads beyond it; this is illustrated in the pair of simulations below.

In both images, a light wave is normally incident from below onto an opaque screen (blue) with a hole in it. In the top picture, the hole is one wavelength wide, while on the bottom, the hole is three wavelengths wide. It can be seen that the light wave spreads rapidly upon emerging from the one-wavelength hole, while the wave emerging from the three-wavelength hole spreads much less and effectively travels in a narrow column equal to the width of the aperture. In short, the smaller the hole, the more the emerging light spreads.

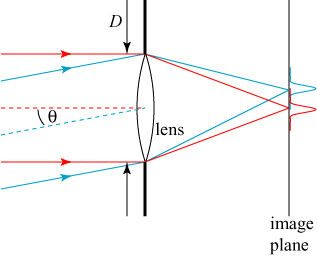

Diffraction also happens in imaging systems. The cylinder of a telescope “chops off” the edges of an incoming light wave, just like the aperture in the images shown above. Even if we forgo putting our lens/mirror in a cylinder at all, the boundary of said lens/mirror chops off the light wave anyway. The light that gets focused onto our detector is already spreading as it is being focused.

This spreading results in a fundamental limitation to the performance of any imaging system. Let us suppose we focus our telescope onto a perfect point-like source of light that is some distance away. Ideally, we would like the image formed by our telescope to be a point of light as well, but instead we will find that the image of the point source is an extended circle of light, as illustrated below.

This spot, formed by the diffraction of light at a circular aperture, is known as an Airy disk. This spot consists of a central bright region surrounded by increasingly dimmer rings of light; this can be seen more clearly in a cross-sectional plot of the intensity, as shown below.

The size of the spot is inversely related to the size of the aperture: a larger aperture results in a smaller spot. It therefore follows that a good telescope will have as large an aperture as possible, to minimize the spot size. A large aperture also collects more light, allowing the imaging of fainter objects.

We are now in a position to talk about how diffraction limits the resolution of an imaging system. Suppose we are interested in imaging two perfect point sources of light (say, two distant stars) that are relatively close together. If the two points are sufficiently far away from one another, their individual diffraction spots will not overlap and we can clearly distinguish between, or resolve, the two independent sources.

If we imagine moving the two point sources closer together, we eventually reach a point where their diffraction spots overlap to an extent that they look more or less like a single object.

An image of two point sources that are separated by one half of the Rayleigh resolution limit (discussed below).

The natural question to ask: what is the closest distance we can put these two point sources and still distinguish them? The definition of this distance is somewhat subjective, but the physicist Lord Rayleigh came up with the most quoted criterion: two points are barely resolvable when the peak of one spot overlaps the first minimum of the second spot. This is shown below.

Rayleigh resolution criterion: two objects are barely resolved when the peak of one spot (blue here) corresponds to the first minimum of the other spot (red here).

The dashed line indicates the total intensity of the two spots together. An image of two Rayleigh-resolved spots would look as shown below.
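For readers who want to check the Rayleigh picture numerically, here is a minimal sketch. It assumes the standard Airy intensity profile for a circular aperture, (2·J1(x)/x)², and evaluates the Bessel function J1 from Bessel's integral so that only the standard library is needed. The function names are illustrative choices, not anything from the original figures.

```python
import math

def bessel_j1(x, steps=200):
    """J1(x) via Bessel's integral: (1/pi) * integral_0^pi cos(t - x*sin(t)) dt."""
    h = math.pi / steps
    # Midpoint rule; the integrand is smooth and periodic, so this converges quickly.
    total = sum(math.cos(t - x * math.sin(t))
                for t in ((k + 0.5) * h for k in range(steps)))
    return total * h / math.pi

def airy(x):
    """Normalized Airy intensity (peak value 1) at dimensionless radius x."""
    if abs(x) < 1e-9:
        return 1.0
    return (2.0 * bessel_j1(x) / x) ** 2

# First zero of J1, roughly 1.22 * pi: the radius of the first dark ring.
FIRST_ZERO = 3.8317

def combined(x, separation=FIRST_ZERO):
    """Total intensity of two equal point sources `separation` apart."""
    return airy(x) + airy(x - separation)

# At the Rayleigh separation, compare the dip midway between the
# two sources with the intensity at one of the peaks.
peak = combined(0.0)
midpoint = combined(FIRST_ZERO / 2.0)
dip_ratio = midpoint / peak
print(f"intensity midway between the sources: {dip_ratio:.1%} of the peak")
```

The dip between the two peaks is modest, which is one way of seeing why "barely resolvable" is a fair description of the Rayleigh condition.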

Mathematically, the Rayleigh resolution criterion translates to the following equation:

.

In this equation, is the wavelength of the light,

is the diameter of the aperture, and

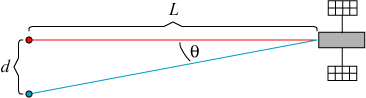

is the observed angular separation between the two objects. The latter two parameters are illustrated for a simple telescope below.

One more observation will be helpful. We’re really interested in the actual separation of objects that can be resolved, not the angular separation. A little trigonometry allows us to relate the angle to the object-image distance

and the spatial separation of the objects,

.

Mathematically, it follows that , but for large enough distances

, we may write

. Rayleigh’s resolution criterion then suggests that the minimum separation

at which two objects may be resolved is:

.

We can now ask the question: how well could the NRO’s “Hubble” satellites resolve objects on the Earth? The mirror diameter is 94 inches, or $D \approx 2.4$ meters. Visible light has a wavelength of 390 to 750 nanometers; we’ll use $\lambda = 500$ nanometers as an average value.

What do we choose for the height of the satellite? The article doesn’t say, but Wikipedia notes that many Earth observation satellites orbit at altitudes of 700-800 km, and “altitudes below 500-600 kilometers are in general avoided, though, because of the significant air-drag at such low altitudes making frequent orbit raising manoeuvres necessary.” Considering that the U.S. government typically doesn’t care about the cost and effort of things like orbit raising, we can boldly assume an altitude of 500 kilometers.

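Putting these numbers together, we find that, according to the Rayleigh criterion, the NRO satellites could potentially resolve objects separated by a distance as small as

$$1.22 \times \frac{(500\times10^{-9}\ \text{m})\,(500\times10^{3}\ \text{m})}{2.4\ \text{m}} \approx 0.13\ \text{meters}.$$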

That is quite impressive — roughly speaking, two distinct objects on the ground only five inches apart from one another could be distinguished by the telescope!
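The arithmetic is easy to reproduce. The short sketch below applies the Rayleigh estimate (minimum separation = 1.22 × wavelength × distance / aperture diameter) to the numbers assumed in this post: 500 nm light, a 94-inch mirror, and a 500 km altitude. The function name is just an illustrative choice.

```python
INCH_IN_METERS = 0.0254

def rayleigh_ground_resolution(wavelength_m, aperture_m, distance_m):
    """Smallest resolvable separation (meters) under the Rayleigh criterion,
    using the small-angle approximation."""
    return 1.22 * wavelength_m * distance_m / aperture_m

# Values assumed in the post; the altitude in particular is a guess.
wavelength = 500e-9              # 500 nanometers
aperture = 94 * INCH_IN_METERS   # 94-inch mirror
altitude = 500e3                 # 500 kilometers

resolution = rayleigh_ground_resolution(wavelength, aperture, altitude)
print(f"{resolution:.3f} m, or {resolution / INCH_IN_METERS:.1f} inches")
```

Changing `altitude` or `wavelength` shows how directly the result scales with each assumption: both enter linearly.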

It is a little harder to estimate what this would imply for distinguishing objects in a complex image like a photograph of the Earth’s surface. Roughly speaking, every point in such a complex image would be smeared out over a 5 inch diameter circle: on a 5 inch scale, objects would appear blurry.

A system whose primary limitation to resolution is diffraction is known as a diffraction-limited system. The diffraction limit for the NRO satellites, according to the Rayleigh criterion, actually seems to fall short of what would be an ideal spy satellite application: identifying people on the ground by facial recognition. Is there any way to improve on the Rayleigh limit?

In fact, there is! It should be noted that Rayleigh introduced his criterion around the turn of the 20th century, when there were no computers available to analyze data and the best device available for recording images was the human eye. Let’s reiterate Rayleigh’s criterion: “two points are barely resolvable when the peak of one spot overlaps the first minimum of the second spot.”

Rayleigh chose a convenient definition for resolution, but it was in fact a rather arbitrary one! In fact, the English astronomer W.R. Dawes empirically determined a slightly narrower resolution limit than Rayleigh’s.

It is possible to do significantly better than even Dawes’ limit, however, with the advent of image processing software. Below is an intensity cross-section of a spot formed by two points separated by less than the Rayleigh criterion.

If we were to look at the total spot by eye, we would be unable to distinguish the individual contributions. But if we know what the image of an individual point source looks like, we could deduce that the total spot consists of two point sources.

The blurred image of an individual point source is known as the point spread function, because it represents how the light from an individual point source spreads in the image. If we know the point spread function of an imaging device such as a telescope, we can perform a mathematical process known as deconvolution to “unblur” the image and in principle recover a perfect image!

The idea of deconvolution can be roughly understood from the preceding picture. Given the total intensity and the point spread function, we try and match our intensity function to a finite number of point spread functions of different heights and positions, added together. When we come up with a match, the positions of the point spread functions represent the positions of the original points.
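The matching idea can be sketched in a few lines. This is a toy model fit, not a production deconvolution algorithm: it assumes a Gaussian point spread function as a stand-in for the Airy pattern, a noise-free one-dimensional profile, and exactly two sources. All names and numbers are hypothetical. For each candidate pair of positions it solves for the best heights by least squares and keeps the pair with the smallest mismatch.

```python
import math

def psf(x, width=1.0):
    """Hypothetical Gaussian point spread function (stand-in for an Airy pattern)."""
    return math.exp(-0.5 * (x / width) ** 2)

def fit_two_points(xs, observed, candidates):
    """Grid-search two source positions; heights come from 2x2 least squares."""
    best = None
    for i, p in enumerate(candidates):
        for q in candidates[i + 1:]:
            f = [psf(x - p) for x in xs]
            g = [psf(x - q) for x in xs]
            # Normal equations for observed ~ h1*f + h2*g.
            ff = sum(a * a for a in f)
            gg = sum(b * b for b in g)
            fg = sum(a * b for a, b in zip(f, g))
            fy = sum(a * y for a, y in zip(f, observed))
            gy = sum(b * y for b, y in zip(g, observed))
            det = ff * gg - fg * fg
            if abs(det) < 1e-12:
                continue
            h1 = (fy * gg - fg * gy) / det
            h2 = (ff * gy - fg * fy) / det
            err = sum((h1 * a + h2 * b - y) ** 2
                      for a, b, y in zip(f, g, observed))
            if best is None or err < best[0]:
                best = (err, p, q, h1, h2)
    return best[1:]

# Two sources close enough that their blurred images merge into one bump.
xs = [i / 10 for i in range(-50, 51)]
true_sources = [(-0.4, 1.0), (0.4, 0.7)]   # (position, height), made up
observed = [sum(h * psf(x - pos) for pos, h in true_sources) for x in xs]

candidates = [i / 10 for i in range(-20, 21)]
p, q, h1, h2 = fit_two_points(xs, observed, candidates)
print(f"recovered positions {p:+.1f}, {q:+.1f} with heights {h1:.2f}, {h2:.2f}")
```

With perfect, noise-free data the fit recovers both sources even though the blended profile shows only a single peak. Adding even a little random noise to `observed` makes the recovered positions much less reliable.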

In practice, we can only use deconvolution to improve the resolution of an optical imaging system to a small extent due to the presence of noise in the image. This noise could be inherent in the optical system or due to atmospheric turbulence. If I were to conservatively guess, I would say that we could improve the resolution by a factor of 2 over the Rayleigh criterion. This means that the telescope could potentially have a resolution of about 6.4 centimeters, or roughly 2.5 inches.

This is quite impressive, and is quite close to a resolution where faces could be recognized. For those concerned about government surveillance, however, it is worth noting that it would be extremely difficult to improve the resolution further than this. The only two things we could change to reduce the Rayleigh limit are:

- Reduce the altitude of the satellite. As noted, this would be tough to achieve due to atmospheric drag.

- Increase the size of the aperture/telescope mirror. As mirror size increases, the difficulty of fabricating it correctly increases dramatically. Furthermore, a larger mirror (and telescope) becomes more difficult to fit into a launch vehicle to be put into orbit. The payload bay of the recently decommissioned space shuttles, for instance, measured 4.6 meters by 18 meters.

Even if we could improve one of these parameters, the payoff would be limited. In order to halve the resolution limit by increasing the mirror diameter, we would have to double the mirror diameter. That would give it a diameter of 4.8 meters; assuming a length comparable to Hubble of 13.2 meters, it would be unable to fit in the space shuttle.
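The same Rayleigh estimate can be turned around to ask what mirror a given resolution would demand. The sketch below reuses the numbers assumed in this post (500 nm light, 500 km altitude, a 2.4 m mirror, a 4.6 m wide shuttle payload bay). The function name is an illustrative choice.

```python
def required_aperture(wavelength_m, distance_m, target_separation_m):
    """Aperture diameter (meters) needed to reach a target Rayleigh separation."""
    return 1.22 * wavelength_m * distance_m / target_separation_m

WAVELENGTH_M = 500e-9        # assumed average visible wavelength
ALTITUDE_M = 500e3           # assumed orbital altitude
CURRENT_MIRROR_M = 2.4       # 94-inch mirror, rounded
SHUTTLE_BAY_WIDTH_M = 4.6    # payload bay width quoted in the post

# Rayleigh limit of the existing mirror, then the mirror needed to halve it.
current_limit = 1.22 * WAVELENGTH_M * ALTITUDE_M / CURRENT_MIRROR_M
needed = required_aperture(WAVELENGTH_M, ALTITUDE_M, current_limit / 2)
fits_in_shuttle = needed <= SHUTTLE_BAY_WIDTH_M

print(f"mirror needed: {needed:.1f} m; fits in shuttle bay: {fits_in_shuttle}")
```

Because the required diameter is inversely proportional to the target separation, every further halving of the resolution limit doubles the mirror again.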

Perhaps the most telling observation is the fact that the NRO is giving away these satellites. One wonders if laboratory tests of the actual telescopes fell short of expectations; this does not seem like an unreasonable assumption.

Hopefully NASA will have better luck with them; at the very least, they’ve already provided a small scientific payoff by giving me an excuse to talk about image resolution!

To me, the important parameter is not resolution, it is time. How much processor time can the computers give to processing the images they collect? How much time can they give to processing the changes in the images they collect? How much time can the human handlers give to interpreting the changes that the processing computers identify?

There are many other factors limiting the spatial resolution of a spy sat.

A deep-space telescope, like Hubble, doesn’t worry about the atmosphere. Turbulence from the atmosphere is complex and dynamic, making it almost impossible to compensate for. (Systems that require accurate distance/angle info, like GPS, use some models to compensate for atmospheric effects. But this kind of adjustment doesn’t help much when it comes to the spatial resolution of a telescope. It only improves georeferencing.)

Spy telescopes aiming at the earth’s surface have come to the point where the identifiable target size is no larger than the scope itself (measured by aperture). When you go beyond this point, focusing becomes an important issue. The objects deep-space telescopes look for are stars or galaxies, which are much larger than whatever devices humans can build. Therefore, those scopes always focus at infinity. You still have to be really accurate to get it right. But once it’s done, it’s done. For spy satellites, the target distance varies, so the focusing must be done on-the-fly, which is much harder than locking at infinity. The atmospheric turbulence mentioned above makes it even more complex.

When a deep-space telescope looks at a dark part of the sky and looks for a far-far-far-away galaxy, it aims at the target, stabilizes itself, and then spends minutes, hours or even days taking lots of shots to get the final high quality image. A spy sat has a very limited time window for a given mission. The optical sensor itself may be really powerful, but you’d better keep in mind that the space vehicle which supports the sensor moves at a crazy speed of 6.5-7.8 km/s. The higher the resolution you need, the more likely this speed is going to get in the way. In order to maintain image quality, you need to rotate the scope precisely, so the outcome also depends on the satellite’s attitude control. Even if the attitude control is good, you still have very limited time to take a shot, and quick shots lead to bad quality.

When you place a satellite somewhere very close to the earth’s surface, the device will move very fast, and the shadow of the earth will have sharp edges throughout the orbit. This means a spy satellite has to switch between the shadow and the sunlight very quickly, resulting in faster temperature changes compared to other satellites. While this may be nothing for some other types of satellites in the modern era, it can be a disaster for a huge and precise optical device.

Another problem for spy satellites is they are built for political/military purposes and therefore face military threat. A large satellite traveling in low earth orbit is easy to kill when a war begins, so you may want a smaller and less eye-catching one.

Taking all the above into consideration, we’re probably pretty close to the limit already. Unless the fundamental design of those systems changes dramatically, there seems to be no reason to expect significant improvement of spatial resolution in the near future.

Given that the earth, at least in daytime, is pretty bright and computers are pretty cheap, could you gang two telescopes a distance apart to get a higher resolution? I know they do this in the radio bands and with the optical Keck scopes on Mauna Kea. (Yes, this would take a space shuttle type mission to do, but the payoff could be high for a spy satellite.)

It’s an interesting idea, but my understanding is that a pair of optical telescopes can be used easily for interferometry, but not so easily for image enhancement. Not exactly my area of expertise, but it’s easier to upscale radio astronomy with multiple telescopes because of the much lower frequency of the signal and the relative ease of measuring the phase of the signal. In essence, in order to use multiple telescopes as a huge virtual aperture, you need to be able to connect the relative phases of the independent telescopes, as well as their images.

Is the size of the pixels on the National Reconnaissance Office’s donated telescope known?

There’s a fun article that describes how you can easily calculate the angular resolution of a camera / telescope if you only know the pixel size and the focal length.

http://cosmologyscience.com/cosblog/cosmic-microwave-angular-resolution-surprise/

The pixel size of the sensor on the NRO satellites isn’t known, or at least isn’t given in the article I read! The pixel size is, in effect, a practical limit on the telescope resolution, where the Rayleigh limit is a physical limit. In this day and age, it’s not in general a problem to design an optical system where pixel limitations are not a problem, but Rayleigh can’t be easily overcome.

Actually, I wouldn’t be surprised if the reason NRO is retiring these satellites isn’t that they failed to perform up to expectation, but rather that the increasing performance and ease of deployment of aerial drones rendered them obsolete. Drones might be smaller, but they obviously also get far closer to their targets than the satellites could.

Given their mission shift from spying on foreign superpowers (where airspace issues give a huge advantage to satellites) to spying on small groups of armed insurgents and terrorists, it’s not hard to see satellites like these being perceived as giving too small a return on investment vs. drone aircraft.

A very good point! I had thought about the same point after writing the post, but didn’t update it. Since we’re even happy to send high-altitude drones over foreign airspace (like Iran) these days, it would make sense that the surveillance emphasis would shift to drones, which can obtain superior resolution with their much lower altitude.

I would venture a guess that a telescope could be made using aperture synthesis. That would take the burden off individual optical element size. An optical version of http://en.wikipedia.org/wiki/Shuttle_Radar_Topography_Mission

As I noted in another comment, my impression is that aperture synthesis is difficult in the optical regime due to the difficulty of controlling phase effects. Synthetic aperture radar is also an active sensing system, where the system serves as both source and detector. This isn’t to say that I think it is necessarily impossible, but it would be pretty difficult!

According to this website http://www.spacetelescope.org/about/faq/

Hubble has resolution of 30 cm if it is looking at Earth.

The pixel size of wide field camera 3 on Hubble is 0.04 arcsec and field of view of 160*160 arcsec.

http://www.nasa.gov/mission_pages/hubble/servicing/SM4/main/WFC3_FS_HTML.html

Can anyone tell me what the size of that sensor is in millimeters or meters? As seen from the picture, it is quite big.

Curiously, the “spacetelescope” site gives a resolution roughly double of what my calculation and Wikipedia suggest. This could be a simple mistake on their part, or may just be an illustration of how different people can choose different standards for resolution!

Hi there! Being an amateur photographer fan, I find this kind of article really awesome for understanding optics some ranks above that of camera lenses so that, applying some of this content, we can improve our work.

Great job and keep them coming! 😉

Thanks!

Please read “Being an amateur photography fan”!

Thanks again!

Best regards from Portugal! 😉

Dr. Grunsfeld described the telescopes as “bits and pieces” in various stages of assembly, lacking a camera and other accouterments, like solar panels or pointing controls, of a spacecraft.

Couple of ways to beat your 2.5inch resolution:

1) image in UV. Let’s say you can work at 100nm. (That may be pushing it as mirror coatings may not be reflective at this wavelength, but hey, let’s assume an unlimited budget for exotic coatings and making the mirror surface accuracy to incredible precision!) That increases linear resolution by a factor of 5 over the 500nm you assumed. That’s a factor of 25x more detail.

2) you put the satellite in a ‘swooping’ orbit. Which is what they do with spy satellites. This means they swoop to 250km, skimming the top of the atmosphere, and then sail out to 1,000km so the average height is at a safe distance to avoid dragging them back to earth too soon. That’s another factor of 2 in linear resolution.

So, UV swooping spy sat can now resolve to 0.25inch. Face recognition should be easy 🙂

Oh and that’s 100x more detail ‘by area’.